AI-Powered Affiliate Content Personalization at Scale (Without Creepy Tracking)

AI-powered affiliate content personalization at scale (without turning your site into a creepy slot machine)

I keep seeing affiliate programs built like a junk drawer—everything tossed in, nothing labeled, and somehow we’re surprised when it jams.

Now we’re doing the same thing with “AI personalization.” We sprinkle a model on top, swap a few CTAs, and call it sophisticated. Then revenue wiggles, partners complain, and nobody can explain why.

My thesis is boring: personalization is an incentives + measurement problem first, and a tooling problem second. If you can’t audit the logic, respect consent, and measure lift, you’re not personalizing—you’re just adding randomness with better vocabulary.

If you want a practical outcome from this post, it’s this: you’ll be able to build a repeatable, consent-aware personalization system for affiliate content—modules, tracking, tests, governance—without rewriting 500 pages by hand.

Define the thing: what “personalization” means for affiliate content (and what it doesn’t)

Personalization gets talked about like it’s one thing. It isn’t.

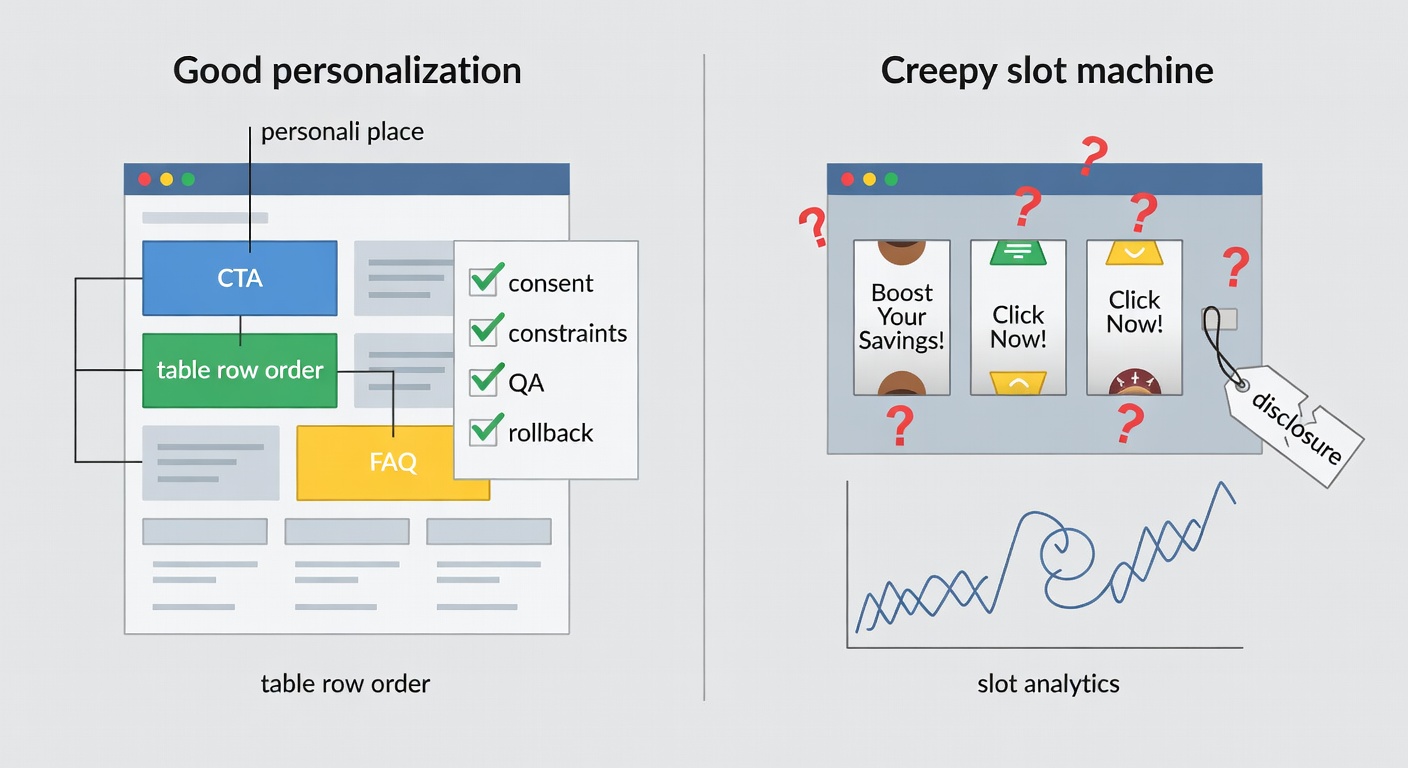

Personalization (for affiliate content) = changing specific page elements based on allowed signals to improve decisions and conversions. Not “rewrite the whole article for every visitor.” That’s a QA nightmare and, honestly, usually unnecessary.

Here’s the taxonomy I use when I’m trying to keep teams honest:

- Content modules (tables, callouts, FAQs, proof blocks)

- Offer selection (which merchant/product gets recommended)

- Messaging/positioning (price vs. speed vs. risk vs. simplicity)

- UX routing (TOC order, jump links, “start here” paths)

And here’s what it doesn’t mean: stalking people around the internet and guessing their life story from a browser fingerprint. That era is brittle and increasingly restricted by platform and regulatory pressure (and it deserved to be brittle, if I’m being blunt) (Usercentrics).

Personalization layers you can actually ship: modules, not magic

If you want scale, you need a unit of scale.

Modules are the unit. Swap a “Best for budget” callout. Reorder a comparison table. Change a CTA from “Check price” to “See compatibility.” Add a region-specific pricing note. That’s shippable.

Full-page AI rewrites are brittle because they multiply review surface area. Every variant becomes a new page you have to fact-check, disclose, and keep current.

Natural language generation (NLG) can help—but I like it best when it’s constrained to structured inputs (your product database, your eligibility rules, your pricing fields) and used to narrate differences, not invent them. That’s basically what NLG is for: translating structured data into readable text (Yellowfin).

Small note: if your inputs are messy, your outputs will be confidently messy. AI doesn’t fix data hygiene. It just makes the mess faster.

If you only remember one thing from this section, make it this.

Personalize blocks. Not entire articles.

The affiliate-specific twist: you’re personalizing recommendations, not just copy

Affiliate personalization isn’t just “better UX.” It changes who gets paid.

When you personalize recommendations, you’re changing:

- merchant distribution (and partner relationships)

- compliance exposure (claims, disclosures, pricing accuracy)

- incentives (which partners you’re rewarding for which behavior)

That’s why I’m obsessive about an audit trail. If your top partner’s EPC drops 30% and you can’t answer “what changed,” you’re going to end up telling stories instead of doing diagnosis.

And yeah, this is where last-click attribution gets weird: the partner credited with the sale isn't always the one who did the persuading.

Section closer: What behavior are you paying for—discovery, persuasion, or checkout interception?

Start with first-party data (because third-party assumptions are getting worse, not better)

As of 2026-02-08, my read is that first-party data is the only durable foundation for personalization that won’t collapse the next time a browser or platform tightens the screws.

Usercentrics frames this cleanly: first-party personalization is based on data users generate directly with you (browse, buy, preferences), and it’s stronger when it’s transparent and consent-led (Usercentrics).

Third-party data? Often inferred, opaque, and increasingly unreliable. Even when it “works,” it’s hard to defend.

What first-party signals are useful for affiliate personalization (and low-risk)

You don’t need a surveillance rig. You need a few high-signal inputs.

Here’s my prioritized list for affiliate content personalization:

- Page intent (query class / page type: “best,” “vs,” “review,” “alternatives”)

- On-site behavior (scroll depth, table interactions, filter clicks)

- Declared preferences (quiz/poll answers—only if you can explain the benefit)

- Geo/currency (country, currency display, availability)

- Device (mobile vs desktop changes what “friction” means)

- Returning vs new (continuity modules)

- Email click context (campaign/subscriber segment that brought them in)

Progressive profiling matters here: collect less upfront, earn the right to ask for more later. Usercentrics calls this out directly as a practical strategy (Usercentrics).

Boring. Effective.

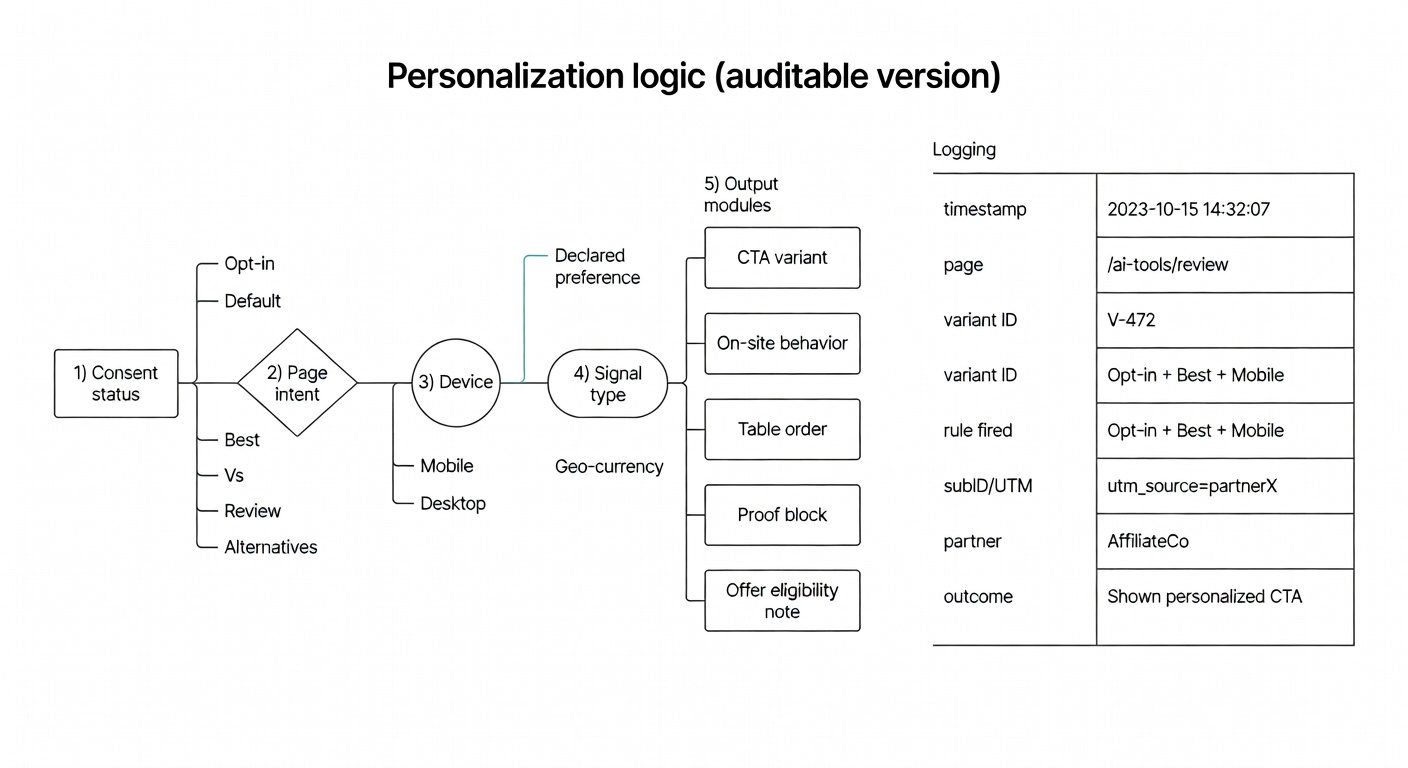

Consent isn’t optional just because the data is ‘yours’

This is the part that makes me twitchy.

First-party data isn’t a free pass. GDPR and the ePrivacy Directive still apply; what matters is your legal basis and whether consent is required for the processing you’re doing (Usercentrics).

Usercentrics is blunt about it: “First-party data without consent is still a risk.” And when consent is your basis, it has to be freely given, specific, informed, and unambiguous (Usercentrics).

So operationally:

- Segment by consent status

- Build a strong default experience (contextual, non-creepy)

- Offer an enhanced experience for opt-ins (behavioral/declared)

No consent, no tracking.

Section closer: Are your disclosures clear enough that you’d feel fine showing them to a regulator—or your mom?

The scale problem: how to personalize 500 pages without breaking trust (or your QA budget)

Most “AI affiliate content” advice is basically: “write more, faster.”

Cool. But scale breaks in the same place every time: governance.

If you want personalization at scale, you need an operating system:

- templates

- constraints

- review rules

- logging

- rollback ability

Honestly, I’m relieved when a tactic is boring.

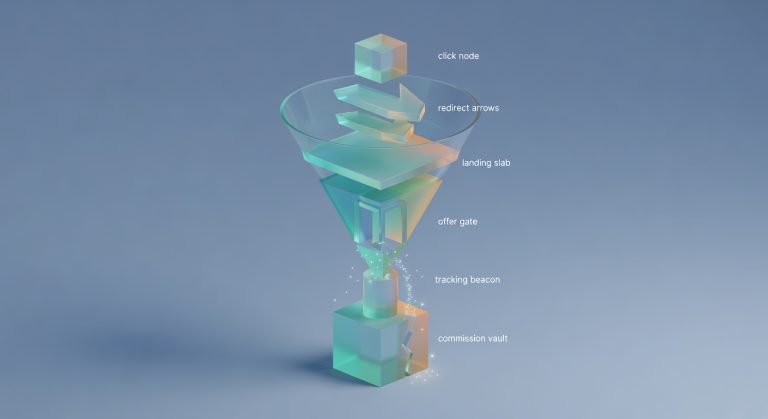

Build a content template system with swappable blocks

The real scaling lever isn’t AI. It’s structure.

A workable architecture looks like this:

- Structured dataset (products/merchants, pricing fields, eligibility, regions, pros/cons, update timestamps)

- Rendering layer (your CMS/template system)

- Block library (comparison rows, “best for” callouts, proof modules, pricing notes, FAQs, CTAs)

- Personalization rules (segment → block variant)

Then, optionally, NLG to narrate structured differences (“If you travel internationally, Plan B supports multi-currency payouts…”). That’s aligned with how NLG is typically described: scanning structured data and translating it into readable narrative (Yellowfin).
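To make "segment → block variant" concrete, here's a minimal sketch of a rules table and a resolver. Every name in it (`BlockId`, `Rule`, `resolveBlock`, the segment and variant IDs) is hypothetical and not tied to any particular CMS. The point is only that each block resolves to a pre-reviewed variant, with a default when nothing matches.

```typescript
// Hypothetical block names; use whatever your block library actually contains.
type BlockId = "cta" | "best_for_callout" | "pricing_note";

interface Rule {
  segmentId: string; // e.g. "intent:vs" or "geo:GB" (assumed naming scheme)
  block: BlockId;
  variantId: string; // a pre-reviewed variant from the block library
}

interface Resolution {
  block: BlockId;
  variantId: string;
  segmentId: string | null; // null means the default variant was used
}

const DEFAULT_VARIANTS: Record<BlockId, string> = {
  cta: "cta_check_price",
  best_for_callout: "best_overall",
  pricing_note: "pricing_generic",
};

// First matching rule wins; anything unmatched falls back to the default.
function resolveBlock(block: BlockId, segments: string[], rules: Rule[]): Resolution {
  for (const rule of rules) {
    if (rule.block === block && segments.indexOf(rule.segmentId) !== -1) {
      return { block, variantId: rule.variantId, segmentId: rule.segmentId };
    }
  }
  return { block, variantId: DEFAULT_VARIANTS[block], segmentId: null };
}

const rules: Rule[] = [
  { segmentId: "intent:vs", block: "cta", variantId: "cta_see_compatibility" },
  { segmentId: "geo:GB", block: "pricing_note", variantId: "pricing_gbp_note" },
];

const visitorSegments = ["intent:vs", "geo:GB"];
const cta = resolveBlock("cta", visitorSegments, rules);
const callout = resolveBlock("best_for_callout", visitorSegments, rules);
```

Returning the `segmentId` alongside the variant is deliberate: it's the "why did they see it?" half of the audit trail, so it can be logged with the impression.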

That’s true—well, true in most verticals.

Guardrails for AI-generated variants (so you don’t publish confident nonsense)

If you’re going to generate copy variants, put guardrails in writing.

My baseline set:

- Retrieval from your own dataset (don’t let the model “remember” pricing)

- Banned-claims list (no “guaranteed,” no medical/legal promises, no made-up specs)

- Required disclosure block (affiliate disclosure placement isn’t optional)

- “Unknown” fallback copy (“Pricing varies by region; check current price”)

- Human review triggers (money/health/legal categories, or any time you change recommendation logic)

Usercentrics’ framing helps here: privacy-led personalization is about transparency, choice, control, and accountability—those are governance principles, not vibes (Usercentrics).
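Here's roughly what "guardrails in writing" can look like once it's in code. This is a sketch under assumptions: the banned phrases, the `[affiliate-disclosure]` marker, and the function names are all made up for illustration, and a plain substring check is a blunt instrument that will miss paraphrases.

```typescript
interface GuardrailResult {
  ok: boolean;
  problems: string[];
}

// Hypothetical list; the real one should come out of your compliance review.
const BANNED_PHRASES = ["guaranteed", "risk-free", "cures"];

// Assumed convention: every rendered variant must include this marker.
const DISCLOSURE_MARKER = "[affiliate-disclosure]";

// Checks a generated copy variant against the banned list and the disclosure rule.
function checkVariant(copy: string): GuardrailResult {
  const problems: string[] = [];
  const lower = copy.toLowerCase();
  for (const phrase of BANNED_PHRASES) {
    if (lower.indexOf(phrase) !== -1) problems.push(`banned claim: "${phrase}"`);
  }
  if (copy.indexOf(DISCLOSURE_MARKER) === -1) problems.push("missing disclosure block");
  return { ok: problems.length === 0, problems };
}

// Pricing comes from the dataset; a missing value gets the "unknown" fallback copy.
function priceCopy(price: number | null, currency: string): string {
  if (price === null) return "Pricing varies by region; check current price";
  return `From ${currency} ${price.toFixed(2)}`;
}

// Human review trigger: sensitive categories, or any change to recommendation logic.
function needsHumanReview(category: string, rankingLogicChanged: boolean): boolean {
  const sensitive = ["money", "health", "legal"];
  return rankingLogicChanged || sensitive.indexOf(category) !== -1;
}
```

A variant that fails `checkVariant` shouldn't be "fixed" by the model on a retry loop without a person looking at it; at minimum, log the failure next to the variant ID.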

A change log for personalization (yes, like a lab notebook)

I keep a change log for every site. It’s not glamorous. It saves my sanity.

Log entries should include:

- segment definitions (what qualifies a user)

- which blocks changed (and where)

- recommendation ranking logic changes

- rollout dates and traffic split

- expected KPI impact (what you thought would happen)
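If it helps, the fields above map onto a small record type. This is one possible shape, not a standard: the field names, the example values, and the `validateEntry` helper are all hypothetical.

```typescript
interface ChangeLogEntry {
  rolloutDate: string; // ISO date, e.g. "2026-01-15"
  segmentDefinition: string; // what qualifies a user
  blocksChanged: { template: string; block: string }[];
  rankingLogicChange: string | null; // null if ranking was untouched
  trafficSplit: Record<string, number>; // variant_id -> share of traffic
  expectedKpiImpact: string; // what you thought would happen
}

// Returns a list of problems; an empty list means the entry is complete enough to file.
function validateEntry(entry: ChangeLogEntry): string[] {
  const errors: string[] = [];
  const total = Object.keys(entry.trafficSplit).reduce(
    (sum, key) => sum + entry.trafficSplit[key],
    0
  );
  if (Math.abs(total - 1) > 1e-9) errors.push("traffic split must sum to 1");
  if (entry.blocksChanged.length === 0) errors.push("no blocks listed");
  if (entry.expectedKpiImpact.trim() === "") errors.push("missing expected KPI impact");
  return errors;
}

// Illustrative entry; every value here is invented.
const exampleEntry: ChangeLogEntry = {
  rolloutDate: "2026-01-15",
  segmentDefinition: "page intent = vs AND country = GB",
  blocksChanged: [{ template: "comparison", block: "cta" }],
  rankingLogicChange: null,
  trafficSplit: { control: 0.5, cta_see_compatibility: 0.5 },
  expectedKpiImpact: "Higher outbound CTR on vs pages, flat EPC",
};
```

Forcing the expected impact to be written down before the rollout is the part that pays off later: it's much harder to tell yourself a story after the fact.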

Why? Because Google Optimize is gone, stacks are more fragmented, and you’ll end up stitching insights across tools. CXL notes Optimize sunset on Sept 30, 2023, which forced teams to pick alternatives (CXL).

Okay—now let’s look at what breaks when you scale it.

Section closer: If you’re stuck, start with the funnel map and a change log. Future-you will thank you.

Tracking and attribution: if you can’t measure it, personalization is just vibes

Personalization multiplies variants. Variants multiply attribution confusion.

So before you get fancy: instrument the basics under your own domain, as much as you reasonably can.

A Reddit thread on first-party tracking for stores captures the practical reality: teams are moving toward server-side/first-party approaches (Stape, Elevar, Segment get name-checked), but cost/complexity is real, and many start by making their own store data the source of truth rather than trusting ad platforms (r/woocommerce).

Minimum viable instrumentation for personalized affiliate pages

Let’s be specific:

Capture these events (first-party if possible):

- variant_id (what did they see?)

- segment_id (why did they see it?)

- module_impression (did the block load/enter viewport?)

- outbound_click with merchant_id + link_id (and subID/UTM)

- downstream conversion mapping where possible (network reports, postbacks, or at least proxy metrics)

And yes—this is annoying. But it’s cheaper than arguing with a reversal report later.

Source-wise, the first-party tracking thread is a decent practitioner snapshot and includes tool options and tradeoffs (r/woocommerce).
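For the events themselves, a typed schema keeps variants and segments from drifting apart across templates. The sketch below is an assumption-heavy illustration: the type names, the `subid` query parameter, and the rule that subIDs only contain letters, digits, underscores, hyphens, and dots are mine, since networks differ on both the parameter name and the allowed characters.

```typescript
interface BaseEvent {
  variantId: string; // what did they see?
  segmentId: string; // why did they see it?
  timestamp: number; // ms since epoch
}

interface ModuleImpression extends BaseEvent {
  type: "module_impression";
  moduleId: string;
}

interface OutboundClick extends BaseEvent {
  type: "outbound_click";
  merchantId: string;
  linkId: string;
}

type TrackedEvent = ModuleImpression | OutboundClick;

// Packs segment, variant, and link into one subID so network reports can be joined back.
function buildSubId(e: Pick<OutboundClick, "segmentId" | "variantId" | "linkId">): string {
  return [e.segmentId, e.variantId, e.linkId]
    .map((part) => part.replace(/[^A-Za-z0-9_-]/g, "-"))
    .join(".");
}

// Appends the subID to an affiliate URL. Assumes the URL has no #fragment.
function tagOutboundUrl(url: string, subId: string): string {
  const separator = url.indexOf("?") === -1 ? "?" : "&";
  return `${url}${separator}subid=${encodeURIComponent(subId)}`;
}

const click: OutboundClick = {
  type: "outbound_click",
  segmentId: "intent:best",
  variantId: "cta_b",
  merchantId: "merchant_42",
  linkId: "table-row-1",
  timestamp: 0,
};
const subId = buildSubId(click);
const taggedUrl = tagOutboundUrl("https://merchant.example/p?ref=site", subId);
```

The design choice worth copying is the join key, not the exact format: if the subID carries the segment and variant, a network report can be mapped back to what the visitor actually saw.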

Server-side / first-party tracking options (and when they’re worth the pain)

Decision rule I use:

- Small publisher / low volume: clean UTMs + subIDs + on-site events first

- Scaling spend / need better match rates: consider server-side tagging / first-party tracking

Stape and Elevar come up as common options in practitioner discussions, with Elevar flagged as expensive/technical for smaller teams (r/woocommerce).

This is also where networks get blamed for problems that start in your own setup.

Section closer: Run the boring checks first. They catch the expensive problems.

Experimentation: how to A/B test personalization without lying to yourself

Personalization is seductive because it always “makes sense.” That’s the trap.

The test question isn’t “is this more relevant?” The test question is: does it increase incremental revenue (or EPC) without harming trust metrics?
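As a worked example of what "incremental" means here: EPC is commission divided by clicks, and relative lift compares the variant's EPC to the control's. The sketch below uses hypothetical names (`ArmTotals`, `epc`, `relativeLift`) and invented numbers, and it says nothing about statistical significance, which you still need before trusting the result.

```typescript
interface ArmTotals {
  clicks: number; // outbound clicks in this arm
  commission: number; // confirmed commission attributed to this arm
}

// Earnings per click; zero clicks yields zero rather than dividing by zero.
function epc(arm: ArmTotals): number {
  return arm.clicks > 0 ? arm.commission / arm.clicks : 0;
}

// Relative lift of variant over control, or null when the control EPC is zero.
function relativeLift(control: ArmTotals, variant: ArmTotals): number | null {
  const base = epc(control);
  if (base === 0) return null;
  return (epc(variant) - base) / base;
}

// Invented numbers: control earns 0.50 per click, variant earns 0.60 per click.
const controlArm: ArmTotals = { clicks: 1000, commission: 500 };
const variantArm: ArmTotals = { clicks: 1000, commission: 600 };
const lift = relativeLift(controlArm, variantArm); // roughly 0.2, i.e. about 20%
```

Note that a variant can raise outbound CTR and still lower EPC if the extra clicks are unqualified, which is why the lift is computed on commission and not on clicks.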

And remember: Google Optimize is sunset. CXL calls that out and lists alternatives across budgets and complexity (CXL).

Test the module, not the whole page (most of the time)

Most of the time, page-level tests are too confounded. Too many moving parts.

Instead, test modules:

- CTA copy + placement

- table row ordering

- “best for” logic (segment → recommended item)

- proof block placement (reviews, guarantees, shipping notes)

- pricing display format (range vs exact, “from $X” vs “$X/mo”)
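One practical detail for module tests: a visitor should see the same variant on every page load, and assignment for one test shouldn't correlate with another. A common way to get that is to hash a stable key together with the test ID. This is a sketch, not what any particular testing tool does; `visitorKey` is assumed to be a first-party identifier you're allowed to use for this purpose, and the hash is an FNV-1a-style function over UTF-16 code units.

```typescript
// FNV-1a-style 32-bit hash over the string's UTF-16 code units.
function fnv1a(input: string): number {
  let hash = 0x811c9dc5;
  for (let i = 0; i < input.length; i++) {
    hash ^= input.charCodeAt(i);
    hash = Math.imul(hash, 0x01000193);
  }
  return hash >>> 0; // force an unsigned 32-bit result
}

// Same visitor + same test always lands in the same variant.
function assignVariant(visitorKey: string, testId: string, variants: string[]): string {
  if (variants.length === 0) throw new Error("at least one variant is required");
  const bucket = fnv1a(`${testId}:${visitorKey}`) % variants.length;
  return variants[bucket];
}

const ctaVariants = ["control", "cta_see_compatibility"];
const firstVisit = assignVariant("visitor-123", "cta_copy_test", ctaVariants);
const secondVisit = assignVariant("visitor-123", "cta_copy_test", ctaVariants);
// firstVisit and secondVisit are always equal, so the experience is stable.
```

Including the test ID in the hashed string is what keeps two concurrent module tests from bucketing the same visitors together.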

Conversion Sciences has a warning I wish more affiliate folks would tattoo on their forearm: companies buy enterprise tools and burn their CRO budget because they don’t have the process/skills to use them well (Conversion Sciences).

Exactly.

Tooling reality check: Optimize is gone; pick tools that match your skills

CXL’s list is a good starting point for post-Optimize tooling—AB Tasty, Adobe Target, Optimizely, VWO, Convert, etc. (CXL). Conversion Sciences also lists commonly recommended tools and reinforces the “fit matters” point (Conversion Sciences).

My selection criteria are unsexy:

- do you have engineering support?

- do you have enough traffic for clean reads?

- do you need server-side testing or is client-side fine?

- can you segment by consent status?

If you disagree, I’m open to it—just show me what you’re measuring.

Section closer: I'd rather you ship one clean test than ten messy ones you can't explain.

Personalization strategies that work for affiliate content (with failure modes)

This is where people get burned.

Below are plays I’ve seen hold up—plus how they fail, because they always fail somehow.

Intent-based personalization: route by page type and query class

When to use: always. It’s contextual and low-creep.

Map intent classes:

- “best” → comparison table early, “best for” callouts, decision shortcuts

- “vs” → side-by-side differences, “choose A if…” logic

- “review” → proof, caveats, who it’s not for

- “alternatives” → switching costs, compatibility, migration steps

- “coupon” → be careful (you’re inviting last-click parasites)
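The intent classes above can be derived from something as plain as the page title or slug. Here's a rough sketch; the patterns, their order, and the `classifyIntent` name are my assumptions, and real titles will need more patterns than this.

```typescript
type IntentClass = "best" | "vs" | "review" | "alternatives" | "coupon" | "unknown";

// Order matters: more specific classes are checked before "best",
// so "Best Acme Alternatives" is treated as an alternatives page.
const INTENT_PATTERNS: [IntentClass, RegExp][] = [
  ["coupon", /\b(coupon|promo code|discount code)s?\b/i],
  ["vs", /\b(vs\.?|versus)\b/i],
  ["alternatives", /\balternatives?\b/i],
  ["review", /\breviews?\b/i],
  ["best", /\b(best|top)\b/i],
];

// Returns the first matching intent class, or "unknown" if nothing matches.
function classifyIntent(title: string): IntentClass {
  for (const [intent, pattern] of INTENT_PATTERNS) {
    if (pattern.test(title)) return intent;
  }
  return "unknown";
}

const examples = [
  classifyIntent("Best Budget Laptops for Students"), // "best"
  classifyIntent("Plan A vs Plan B: Which Should You Pick?"), // "vs"
  classifyIntent("Best Acme Alternatives"), // "alternatives"
  classifyIntent("About Us"), // "unknown"
];
```

Because the signal is the page itself and not the visitor, this classification needs no behavioral data, which is a big part of why intent-based routing is the low-creep starting point.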

Failure mode: you optimize for CTR and accidentally send unqualified clicks that don’t convert (or convert at lower AOV).

How to measure: outbound CTR + downstream conversion proxy + EPC by intent class.

A Reddit thread on contextual vs interest-based ads echoes the pattern: contextual tends to perform well for cold traffic, while interest-based can be better for nurturing/retargeting (r/AgencyGrowthHacks). Different channel, same idea: context is often the cleanest signal.

Section closer: What’s the simplest explanation for the performance change you’re seeing?

Geo/currency personalization: the boring win (and usually compliant if done right)

When to use: if you have international traffic, or merchants vary by region.

Show:

- local currency display

- region availability

- payout methods / shipping constraints

- region-specific merchants (don’t recommend what they can’t buy)
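A small sketch of the two mechanical pieces here: filtering out merchants a visitor can't buy from, and formatting a price in their currency. The `Merchant` shape and the sample data are hypothetical; `Intl.NumberFormat` is the standard JavaScript API for locale-aware currency formatting. Prices are read from your dataset and never converted on the fly, so a missing currency gets fallback copy.

```typescript
interface Merchant {
  id: string;
  regions: string[]; // ISO country codes where the offer can be purchased
  prices: Record<string, number>; // currency code -> price from your dataset
}

// Don't recommend what they can't buy.
function availableMerchants(merchants: Merchant[], country: string): Merchant[] {
  return merchants.filter((m) => m.regions.indexOf(country) !== -1);
}

// Formats a known price, or falls back when the dataset has no price in that currency.
function displayPrice(m: Merchant, currency: string, locale: string): string {
  const price = m.prices[currency];
  if (price === undefined) return "Pricing varies by region; check current price";
  return new Intl.NumberFormat(locale, { style: "currency", currency }).format(price);
}

// Invented sample data.
const merchants: Merchant[] = [
  { id: "merchant_uk", regions: ["GB", "IE"], prices: { GBP: 49.99 } },
  { id: "merchant_us", regions: ["US"], prices: { USD: 59 } },
];

const forBritain = availableMerchants(merchants, "GB");
const gbpLabel = displayPrice(forBritain[0], "GBP", "en-GB");
const eurLabel = displayPrice(forBritain[0], "EUR", "en-IE"); // falls back
```

Refusing to convert currencies in code is the guard against the failure mode below: a stale or guessed price is worse than an honest "check current price".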

Tapfiliate gets mentioned by publishers as supporting multi-currency payouts—useful context for global audiences (r/Affiliatemarketing).

Failure mode: you show pricing that’s stale or region-inaccurate and lose trust fast.

How to measure: CVR/EPC by geo + “price mismatch” support/contact events (if you track them).

Section closer: The win isn’t a spike. The win is a system you can trust next month.

Returning visitor personalization: “continue where you left off” beats “we know who you are”

When to use: when your category has a messy middle (most do).

Lightweight continuity modules:

- “Last compared” items

- “Your shortlist” (with an obvious reset)

- “Still deciding?” email capture with a clear value exchange

Failure mode: it feels creepy if you surface too much detail without explaining why it’s there.

How to measure: return rate → outbound click rate, time-to-decision, email opt-in rate.

Usercentrics’ pillars—transparency, choice, control, accountability—are basically your UX checklist for not being weird about this (Usercentrics).

Section closer: You can’t harvest trust you didn’t plant.

Consent-status segmentation: personalize for opt-ins, keep a solid default for everyone else

When to use: immediately, if you’re doing anything beyond contextual.

Build two experiences:

- Baseline: contextual modules, no behavioral profiling

- Enhanced: behavioral/declared personalization for opt-ins

Usercentrics explicitly recommends segmenting by consent status and warns against assuming silence equals consent (Usercentrics).
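In code, the two-experience split can be enforced at the point where signals are read, so behavioral data never reaches the rules engine without an opt-in. This is a sketch under assumptions: the `ConsentState` shape is hypothetical (your consent platform will have its own), and which signals count as "contextual" is a legal call this code can't make for you. Here I've treated page intent and country as contextual purely for illustration.

```typescript
type ConsentPurpose = "personalization" | "analytics";

// Hypothetical shape; map it from whatever your consent platform exposes.
interface ConsentState {
  granted: ConsentPurpose[];
}

interface Signals {
  pageIntent: string; // contextual
  country: string; // treated as contextual in this sketch
  scrollDepth?: number; // behavioral
  quizAnswer?: string; // declared preference
}

type ExperienceTier = "baseline" | "enhanced";

// No recorded grant means baseline: silence is not consent.
function experienceTier(consent: ConsentState): ExperienceTier {
  return consent.granted.indexOf("personalization") !== -1 ? "enhanced" : "baseline";
}

// Strips behavioral and declared signals unless the visitor opted in.
function allowedSignals(signals: Signals, consent: ConsentState): Signals {
  if (experienceTier(consent) === "enhanced") return signals;
  return { pageIntent: signals.pageIntent, country: signals.country };
}

const raw: Signals = { pageIntent: "best", country: "GB", scrollDepth: 0.8, quizAnswer: "budget" };
const optedOut = allowedSignals(raw, { granted: [] });
const optedIn = allowedSignals(raw, { granted: ["personalization"] });
```

Filtering at the input, and not at render time, means a misconfigured rule can't accidentally personalize on data the visitor never agreed to share.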

Failure mode: performance collapses when opt-out rates rise because your baseline experience is weak.

How to measure: KPI split by consent status + opt-in rate + opt-out rate.

Section closer: Pay for value creation, not value capture.

Affiliate program and platform implications (publishers notice when your stack is annoying)

This is a tangent, but it matters.

If you’re a brand building an affiliate program while also trying to personalize content, remember: publishers are your distribution. If your platform creates payout distrust, you’ll attract the wrong partners—or none.

If you’re a brand: choose affiliate software that doesn’t create payout distrust

A thread asking publishers what they prefer is basically a list of “please don’t make this painful.”

Highlights:

- PartnerStack: praised for transparent dashboards and 30-day payments; integrates with Stripe (r/Affiliatemarketing)

- Tapfiliate: noted for no-code setup and multi-currency payouts (r/Affiliatemarketing)

- Warnings: avoid manual approval for withdrawals (Refersion mentioned) and clunky UIs (HasOffers mentioned) (r/Affiliatemarketing)

If you’re asking “why won’t good publishers join?” start here.

Section closer: That’s the whole point: make the incentives obvious, then make them fair.

Fraud and leakage: personalization can amplify the wrong partners if you don’t police it

Higher-converting pages attract attention. Not all of it is friendly.

If your personalized pages convert well, coupon/toolbar partners may try to intercept at the last click. And then you’ll pay them for “value” they didn’t create. (Mini-rant: I still don’t love how opaque some networks can be about this.)

Affise gets a nod for fraud detection in that same publisher thread (r/Affiliatemarketing).

Watch:

- reversal rates by partner

- subID patterns (sudden spikes near checkout)

- partner mix shifts after personalization rollouts
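The first item on that list is easy to automate. Below is a minimal sketch that compares reversal rates per partner before and after a rollout and flags the ones that jumped. The names, the thresholds, and the sample figures are all hypothetical; pick thresholds from your own history, and treat a flag as a reason to look, not as proof of fraud.

```typescript
interface PartnerStats {
  partnerId: string;
  conversions: number;
  reversals: number;
}

function reversalRate(p: PartnerStats): number {
  return p.conversions > 0 ? p.reversals / p.conversions : 0;
}

// Flags partners whose reversal rate rose by more than maxIncrease after the rollout.
// Partners with fewer than minConversions are skipped as too noisy to judge.
// A partner with no "before" data is compared against a baseline of zero.
function flagForReview(
  before: PartnerStats[],
  after: PartnerStats[],
  maxIncrease: number,
  minConversions: number
): string[] {
  const baseline: Record<string, number> = {};
  for (const p of before) baseline[p.partnerId] = reversalRate(p);
  return after
    .filter((p) => p.conversions >= minConversions)
    .filter((p) => reversalRate(p) - (baseline[p.partnerId] || 0) > maxIncrease)
    .map((p) => p.partnerId);
}

// Invented figures for illustration.
const beforeRollout: PartnerStats[] = [
  { partnerId: "content_site", conversions: 200, reversals: 10 },
  { partnerId: "coupon_site", conversions: 100, reversals: 8 },
];
const afterRollout: PartnerStats[] = [
  { partnerId: "content_site", conversions: 220, reversals: 12 },
  { partnerId: "coupon_site", conversions: 150, reversals: 45 },
  { partnerId: "tiny_site", conversions: 3, reversals: 2 },
];
const flagged = flagForReview(beforeRollout, afterRollout, 0.1, 50);
```

Pair the output with the change log: a flag that lines up with a rollout date is a much shorter investigation than one that doesn't.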

Section closer: If your top partner vanished next week, what would your revenue graph do?

A practical rollout plan (so you don’t try to boil the ocean)

Here’s the version that’s boring, repeatable, and easier to audit.

Day 0–30: baseline instrumentation + one modular test

- Pick one template (comparison page is usually best)

- Define 2–3 segments (intent class + geo is plenty)

- Implement events: segment_id, variant_id, outbound_click, module impressions

- Ship one module test (CTA or table ordering)

- Success metric: EPC proxy (click → conversion rate if you have it) or downstream conversion where possible

Remember: Optimize is gone, so plan your testing tool choice accordingly (CXL).

Stop condition: if tracking is inconsistent, pause. Fix tracking. Then test.

Day 31–60: consent-aware personalization + progressive profiling

- Implement purpose-based consent and enforce it downstream (Usercentrics)

- Build baseline vs enhanced experiences (consent-status segmentation)

- Add one declared-preference input (quiz/poll) only if you can explain the benefit

My read is that progressive profiling beats “ask for everything” almost every time (Usercentrics).

Day 61–90: scale via templates + governance (and keep the change log updated)

- Roll out by template, not page-by-page

- Add QA checks for pricing/claims/disclosures

- Set KPI alerts (segment-level regressions)

- Keep the change log current

- Don’t buy tooling that outpaces your process (Conversion Sciences’ warning is real) (Conversion Sciences)

Try it on one offer, one partner type, one traffic source. Then scale what holds up.

Common traps (the ones that make me twitchy)

Trap: buying an enterprise testing tool before you have a testing process

Conversion Sciences quotes Paul Rouke on companies signing multi-year contracts and burning their CRO budget because they don’t have the skills/process to use the tool well (Conversion Sciences).

I’ve seen this movie. It ends with “we tried testing and it didn’t work.”

Trap: treating first-party data as a free pass

Usercentrics is explicit: GDPR doesn’t care whether the data is first-party or third-party; it cares about legal basis, consent where required, and respecting user choices (Usercentrics).

Silence is not consent. Pre-checked boxes are not consent. And “it’s our data” is not a legal argument.

Don’t skip this step.

What I’d measure (and what I’d ignore) for personalized affiliate content

I’m not pretending this is the only way—just the one that survives my stress test.

Primary metrics (per segment × variant × merchant):

- outbound CTR (but don’t worship it)

- EPC proxy (click → conversion where available)

- revenue per session (if you can map it cleanly)

Guardrails:

- bounce rate / pogo-sticking back to SERP (signal of mismatch)

- time-to-decision (sometimes shorter is better; sometimes it means confusion)

- disclosure interaction (if it spikes, something feels off)

The practitioner thread on first-party tracking is a reminder: your own on-site + order data should be the source of truth, not just what ad platforms report (r/woocommerce).

The minimum dashboard: segment × module × merchant

If you build nothing else, build this pivot:

- consent_status

- segment_id

- module_variant

- merchant_id

- impressions, clicks, CTR

- conversions/revenue (if available)
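If your events already carry those four dimensions, the pivot is a short aggregation. This sketch assumes a flat list of impression and click rows with hypothetical field names; conversions and revenue are left out because they usually arrive later from network reports.

```typescript
interface EventRow {
  consentStatus: string;
  segmentId: string;
  moduleVariant: string;
  merchantId: string;
  type: "impression" | "click";
}

interface Cell {
  impressions: number;
  clicks: number;
  ctr: number;
}

// Groups rows by consent_status | segment_id | module_variant | merchant_id
// and computes CTR per group.
function pivot(rows: EventRow[]): Record<string, Cell> {
  const cells: Record<string, Cell> = {};
  for (const r of rows) {
    const key = [r.consentStatus, r.segmentId, r.moduleVariant, r.merchantId].join("|");
    const cell = cells[key] || (cells[key] = { impressions: 0, clicks: 0, ctr: 0 });
    if (r.type === "impression") cell.impressions += 1;
    else cell.clicks += 1;
  }
  for (const key of Object.keys(cells)) {
    const c = cells[key];
    c.ctr = c.impressions > 0 ? c.clicks / c.impressions : 0;
  }
  return cells;
}

// Four impressions and one click for the same cell (invented data).
const dims = { consentStatus: "opted_in", segmentId: "intent:best", moduleVariant: "cta_b", merchantId: "merchant_42" };
const sampleRows: EventRow[] = [
  { ...dims, type: "impression" },
  { ...dims, type: "impression" },
  { ...dims, type: "impression" },
  { ...dims, type: "impression" },
  { ...dims, type: "click" },
];
const table = pivot(sampleRows);
```

Keeping `consent_status` as the first dimension makes the baseline-versus-enhanced comparison a filter, not a separate report.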

You don’t need more tactics; you need fewer unknowns.

FAQ: quick answers for the questions people ask right before they ship something risky

Is this allowed under GDPR?

It depends (sorry). But first-party personalization still needs a valid legal basis, and consent must be valid when it’s required—freely given, specific, informed, unambiguous (Usercentrics).

Do I need server-side tracking?

Not always. Start with clean UTMs/subIDs and first-party event capture; move to server-side when spend/complexity justifies it. Tools like Stape/Elevar/Segment come up in practitioner discussions, with cost/complexity tradeoffs (r/woocommerce).

Will AI content get penalized?

I’m not going to pretend I can predict algorithm enforcement. My operational take: the risk isn’t “AI,” it’s publishing unverified claims. Use structured inputs, guardrails, and human review for sensitive categories.

How many variants is too many?

When you can’t explain performance changes without hand-waving. Start with 2–3 variants per module, per segment. Earn complexity.

Next step (if you want this to actually ship)

Pick one high-intent template (a “best X” or “X vs Y” page). Build one swappable module. Instrument it with segment_id + variant_id. Run one test.

Then write a change log entry like you’re going to have to defend it in a finance review.

If you want a north star here, it’s this: pay for value creation, not value capture.

Run the boring checks first. They catch the expensive problems.

Sources

- Usercentrics — How To Collect And Use First-Party Data Personalization

- Yellowfin — What is Natural Language Generation?

- CXL — 25 of the Best A/B Testing Tools for 2025

- Conversion Sciences — Most Recommended AB Testing Tools (2024 Update)

- Reddit r/woocommerce — Anyone using first-party tracking to improve ad performance?

- Reddit r/Affiliatemarketing — Which affiliate service do publishers prefer?

- Reddit r/AgencyGrowthHacks — Contextual ads vs interest-based targeting (2025)