Influencer Marketplace + Affiliate Platforms: Performance Analytics That Actually Hold Up

Influencer‑Marketplace Hybrid Platforms with Performance Analytics: What Actually Matters (and What Breaks)

TL;DR

Influencer-marketplace “hybrid” platforms only work when they cover three layers—marketplace mechanics, workflow ops, and performance analytics—with an auditable attribution trail. Dashboards are easy; reconcilable, cross-platform measurement (including refunds, dedupe rules, and exports) is what determines whether you’re driving incremental growth or just paying for demand capture.

Key takeaways

- A real hybrid = marketplace + workflow + analytics. If a tool can’t connect creator intake, tracking assets (codes/links), and payouts to order-level outcomes, you don’t have a system—you have a UI.

- Inbound marketplaces change your creator mix. “Creators who apply” aren’t always “creators who convert,” so outbound sourcing is often necessary to control audience fit and category credibility.

- Workflow is the scaling constraint for micro/nano. Time-to-first-post, consistent naming/mapping, and payout reliability matter more than “creator discovery” once volume ramps.

- Attribution transparency beats attribution claims. You need clear windows, dedupe rules (code vs link), and raw exports that reconcile with Shopify/finance/ads—otherwise “ROI” becomes a black box.

- Don’t mix ROAS, ROI, and incrementality. ROAS/ROI are attribution-based; incremental lift requires testing/holdouts, not just a dashboard number.

- Dual-track (code + link) helps—until it doesn’t. It improves coverage across devices/apps, but without explicit precedence and tie-breakers it creates double-crediting, leakage, and commission inflation.

- Refunds, reversals, and fraud controls are non-negotiable. If the platform can’t net out refunds/chargebacks and detect self-referrals, your profitability reporting is fiction.

I keep seeing affiliate programs built like a junk drawer—everything tossed in, nothing labeled, and somehow we’re surprised when it jams.

Now swap “affiliate program” for “creator marketplace.” Same mess, newer UI.

The promise is speed: creators on tap, briefs in templates, payouts automated, dashboards everywhere. The problem is the analytics often can’t answer the questions performance teams actually care about—incremental lift, cross-platform journeys, and whether you just paid for checkout interception with a prettier name. Social Native calls out the shift pretty plainly: sophisticated brands are measuring creator partnerships by CAC, ROAS, and revenue contribution—not just reach and engagement (Social Native). And once you start measuring that way, you need an audit trail, not vibes.

So here’s what we’re doing: define the hybrid category, map the workflow, then stress-test the analytics and incentives. And yes—talk about what breaks.

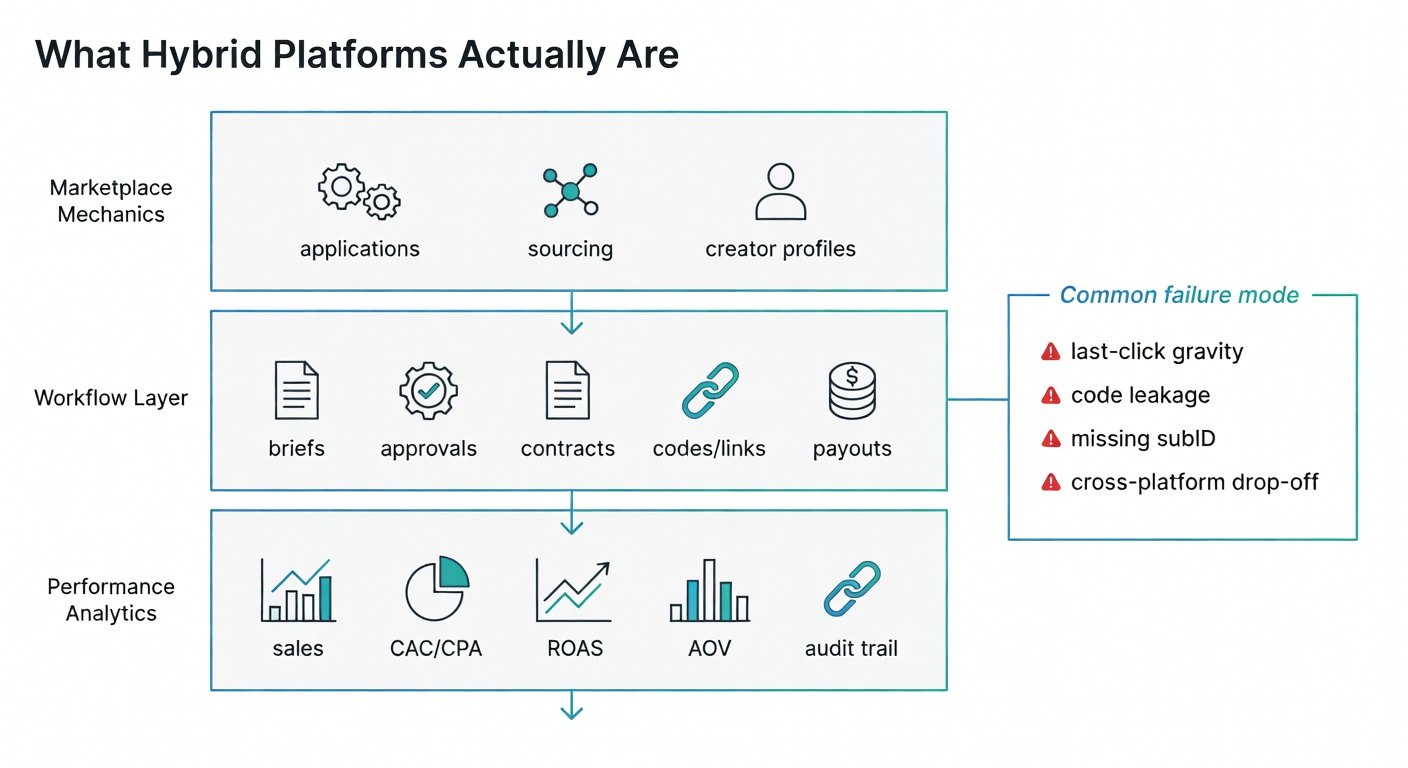

Define the category: “marketplace + workflow + performance analytics” (not just a creator database)

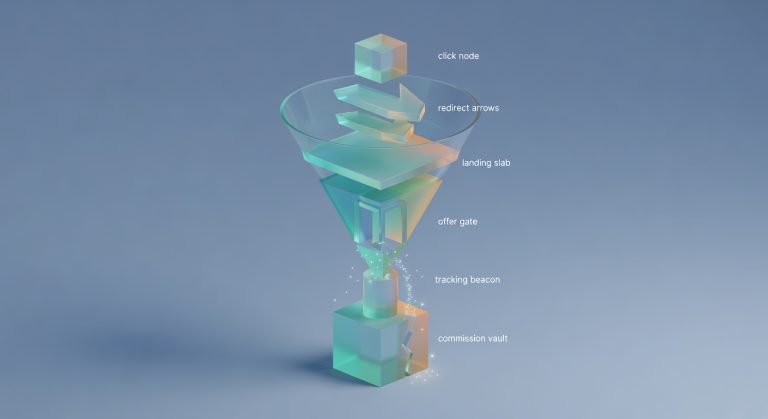

A hybrid platform is three things at once:

- Marketplace mechanics: creators can apply or be recruited at scale (not just “search profiles”). Upfluence, for example, positions its Marketplace around creator applications, approvals, and rapid payments (Upfluence).

- Workflow layer: briefs, approvals, contracts, codes/links, commission logic, payouts. The unsexy stuff.

- Performance analytics: reporting that ties creator activity to business outcomes (sales, CAC/CPA proxies, AOV, ROI/ROAS), not just clicks and views (Upfluence).

Dashboards are easy.

Features & Specs

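| Layer | Key capabilities (1–2 examples) | What “good” looks like | Common failure modes |
|---|---|---|---|
| Marketplace mechanics (creator discovery + recruitment at scale) | Creator applications and approvals; outbound recruitment | A creator mix you control for audience fit and category credibility | Partner mix skews toward creators who apply rather than creators who convert |
| Workflow layer (ops primitives that determine scale) | Briefs, approvals, contracts; codes/links, commission logic, payouts | Short time-to-first-post, consistent naming/mapping, reliable payouts | Faster activation that creates tracking debt |
| Performance analytics (business-outcome measurement) | Creator-level revenue, AOV, and ROI/ROAS reporting; raw exports | Clear attribution windows, dedupe rules (code vs link), and exports that reconcile with Shopify/finance/ads | Double-crediting across code and link; refunds not netted out; “ROI” as a black box |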

Attribution is the hard part.

Marketplace mechanics: inbound applications vs outbound sourcing (and why it changes your partner mix)

Inbound marketplaces (“let creators come to you”) feel efficient because they are. Upfluence literally markets “no manual discovery or outreach” and “one-click creator applications” (Upfluence).

Here’s the catch.

Inbound tends to bias your partner mix toward creators who are good at applying—fast responders, template-fillers, people who live inside platforms. That’s not automatically bad. But it changes the selection pressure. And selection pressure is destiny.

Outbound sourcing is slower, but you can be more deliberate about audience overlap, content format fit, and whether the creator has ever moved a product in your category (not just posted about it once).

Okay—now let’s look at what breaks when you scale it.

Workflow layer: approvals, contracts, promo codes, payouts (the unsexy stuff that determines scale)

If you want micro/nano volume, workflow is the product.

Upfluence highlights contract templates, automated promo code generation, and paying creators via PayPal or Stripe (Upfluence). Rewardful—more affiliate-ops than creator marketplace—leans into the backend primitives: coupon code tracking, PayPal/Wise mass payouts, automated refund handling, and self-referral fraud detection (Rewardful).

My read is: hybrids win when they reduce “time to first post” without creating tracking debt.

If you only remember one thing from this section, make it this.

No tracking. No trust.

Analytics layer: dashboards are easy; attribution is the hard part

Most platforms can show you:

- clicks

- views

- CTR

- “engagement rate”

- attributed revenue (sometimes)

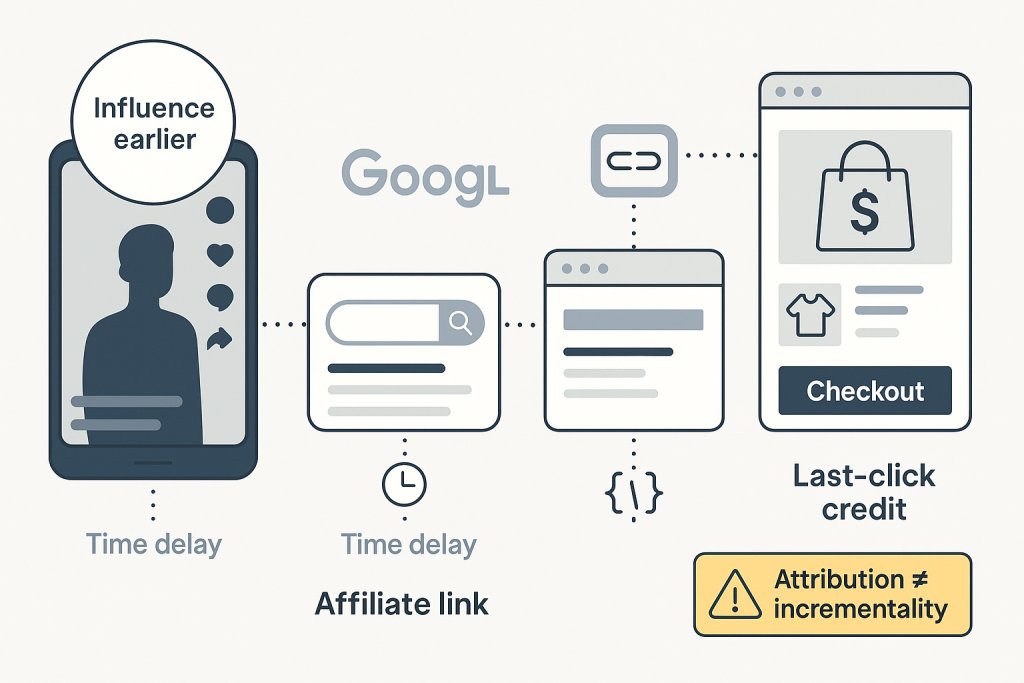

But cross-platform journeys are where teams get burned. SalesHub spells it out: last-click attribution can give zero credit to the influencer touchpoint that started the journey, especially when people see a TikTok, then Google you, then buy later (SalesHub).

And yes, influencer ROI measurement gets messy fast. Impact.com even frames it as an attribution problem, not a “did we get likes?” problem (impact.com).

Alright, now we can talk tactics without lying to ourselves.

Why these hybrids are showing up now: performance teams took the budget (and they want audit trails)

Creator marketing is getting pulled into performance standards. Social Native says the shift is toward CAC/ROAS/revenue contribution, enabled by better attribution infrastructure and integrations with platforms like Meta, TikTok, and Shopify (Social Native).

That’s the reason hybrids exist.

Not because we needed another creator search tool. Because growth teams want something they can defend in a finance review.

And if you’ve ever had to explain a commission spike with no incremental lift… you get it.

ROAS vs ROI vs “incremental lift” (the definitions people keep mixing)

Let me back up and define what I mean by that.

- ROAS: direct sales returned per dollar of ad spend. Narrow. Useful. Incomplete. Impact.com explicitly distinguishes ROAS from ROI this way (impact.com).

- ROI: broader business value. Impact.com gives the formula: ROI = (Revenue generated − Campaign cost) / Campaign cost × 100 (impact.com).

- Incremental lift: the delta you wouldn’t have gotten anyway. This is the part most platform dashboards don’t prove; they mostly “attribute,” they don’t “incrementality-test.” (If your data is cleaner than mine, you may not feel this pain.)

Let’s separate ‘more sales’ from ‘more incremental sales,’ because those are not the same thing.


| Metric | Formula / definition | What it answers | What it misses | Typical misuse in creator dashboards |
|---|---|---|---|---|
| ROAS (Return on Ad Spend) | ROAS = Attributed revenue ÷ Spend. Spend usually includes creator fees + commissions + paid amplification (if counted). “Attributed revenue” depends on the platform’s attribution rules (last-click, code-based, etc.). | “For each $1 we spent on this creator/program, how much revenue did the dashboard attribute back?” | Profit/margin, refunds/returns, COGS, shipping, and whether the revenue would have happened anyway (incrementality). Also sensitive to attribution windows and cross-device/cross-platform journeys. | Treated as “true performance” even when it’s just attributed revenue; compared across creators without normalizing for attribution method (codes vs links vs view-through); used to justify spend while ignoring margin and returns. |
| ROI (Return on Investment) | ROI = (Revenue − Cost) ÷ Cost × 100, where “Cost” should include all-in program costs (fees, commissions, product seeding, agency/platform fees, paid usage rights, etc.). Ideally “Revenue” is incremental revenue, not merely attributed. | “Did we make money after accounting for costs?” (a profitability framing, not just a revenue multiple). | Still not automatically incremental; can be distorted by how you define revenue (gross vs net), timing (cohort payback), and whether you include COGS/margin vs just marketing costs. | Calculated off gross revenue while excluding key costs (COGS, refunds, platform fees), then presented as “profitability”; mixed with ROAS as if they’re interchangeable; used as a single-period scorecard for programs with delayed payback. |
| Incremental lift (Incrementality) | Incremental lift = Outcome in exposed group − Outcome in control/holdout group. Measured via experiments (geo tests, audience holdouts, matched markets, randomized splits) or credible quasi-experiments. Note: incrementality requires testing/holdouts rather than attribution alone. | “What did this creator/program cause that would not have happened otherwise?” (causal impact). | Harder to run and interpret; requires careful design, sufficient sample size, and time. Doesn’t automatically tell you who to credit inside the exposed group without additional instrumentation. | Labeled as “incremental” when it’s actually last-click or code-based attribution; inferred from spikes in attributed revenue without a control; used to claim causality from correlation (e.g., “sales went up after the post”). |
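To make the three definitions concrete, here is a minimal arithmetic sketch. Every number in it is hypothetical, and the function names are mine, not any platform's API. The point is only that the three metrics take different inputs: ROAS and ROI come from attributed revenue, while lift needs a holdout group.

```python
def roas(attributed_revenue: float, spend: float) -> float:
    """Attributed revenue per dollar of spend."""
    return attributed_revenue / spend


def roi_pct(revenue: float, cost: float) -> float:
    """ROI as a percentage: (revenue - cost) / cost * 100."""
    return (revenue - cost) / cost * 100


def incremental_lift(exposed_rate: float, holdout_rate: float) -> float:
    """Absolute lift: outcome in the exposed group minus outcome in the holdout."""
    return exposed_rate - holdout_rate


# Hypothetical program: $10,000 all-in cost, $25,000 attributed revenue.
program_roas = roas(25_000, 10_000)
program_roi = roi_pct(25_000, 10_000)

# Hypothetical holdout test: 3.0% conversion when exposed, 2.4% in the holdout.
lift = incremental_lift(0.030, 0.024)

print(f"ROAS: {program_roas:.2f}x, ROI: {program_roi:.0f}%, lift: {lift * 100:.1f} points")
```

A dashboard can report the first two numbers without ever running the test that produces the third, which is why they should not be read as interchangeable.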

Micro/nano creators + performance measurement: why “small” can be easier to scale than “famous”

Micro/nano is a volume strategy. That means automation and tracking discipline matter more than your creative brainstorm doc.

Social Native reports nano-influencers hitting 10.3% engagement rates on TikTok and says 61% of brands primarily work with nano and micro-creators (Social Native). That’s not a guarantee of conversions, but it does explain why brands keep moving budget there.

And it’s easier to run 200 small tests than one celebrity bet—assuming your workflow doesn’t collapse.

Run the boring checks first. They catch the expensive problems.

Competitor map: the main platform archetypes (and what they’re optimized for)

I’m not going to rank tools. That’s a trap.

Instead, here are the archetypes—because the failure modes differ by archetype.


| Dimension | All-in-one suites | E-commerce influencer ROI platforms | Storefront / creator affiliate | Affiliate ops tools | Retail marketplace affiliate tools | Examples (non-exhaustive) and notes |
|---|---|---|---|---|---|---|
| Primary optimization | End-to-end throughput: discovery → outreach → workflow → reporting in one system. | Proving revenue impact: connect creator activity to sales/CAC/ROAS-style outcomes (often DTC-first). | Conversion surface area: make it easy for creators to sell via a shoppable page + links/codes. | Program plumbing: tracking, payouts, tax, fraud controls, partner management at scale. | Marketplace distribution: monetize within a retailer ecosystem; optimize for in-network conversion. | Upfluence; GRIN; CreatorIQ / Traackr (suite-like). Impact / Partnerize (performance + partnerships). ShopMy; LTK; Beacons (storefront). Rewardful; Refersion; Everflow (ops). Amazon Associates; Walmart Creator / affiliate programs (retail). |
| Best-fit use case | Teams that need a single workflow system for many creators/campaigns and can accept “good enough” attribution for speed. | Performance-led creator programs where finance/performance marketing needs an audit trail and repeatable measurement. | Creator-led commerce where the creator’s “shop” is the hub; strong for always-on affiliate + product seeding. | Brands with established demand who need reliable partner tracking, clean payouts, and scalable operations. | Brands prioritizing retail velocity (or creators who primarily drive sales inside a retailer) over owning the customer journey. | Suites: best when workflow complexity is the bottleneck. ROI platforms: best when measurement credibility is the bottleneck. Storefront: best when creator conversion UX is the bottleneck. Ops tools: best when payouts/compliance/fraud are the bottleneck. Retail tools: best when retailer channel is the bottleneck. |
| Analytics strength | Broad dashboards across the funnel; strong activity reporting; variable depth on incrementality and cross-channel stitching. | Stronger revenue/ROI framing; better for cohorting, creator-level efficiency, and performance narratives (still limited by identity/attribution inputs). | Strong on link/click/product-level conversion inside the storefront; weaker on full-funnel and multi-touch journeys. | Strong on deterministic tracking events (click → order), payouts, refunds, and partner-level reporting; less native “content performance” context. | Strong in-network conversion reporting; limited visibility into off-site influence and pre-retail discovery. | Look for: exportability, event-level logs, refund handling, and clear attribution rules, regardless of archetype. |
| Attribution risk | Medium–high: “one dashboard” can mask mixed methodologies; last-click bias and channel overlap can inflate perceived impact. | Medium: better instrumentation helps, but cross-platform journeys and view-through claims can still over-credit creators without incrementality controls. | High: heavy reliance on links/codes can reward checkout interception and undercount upper-funnel influence. | Medium: deterministic tracking is clean, but still typically last-click unless you layer rules, holdouts, or MMM/MTA. | High: retailer ecosystems often limit identity and multi-touch visibility; attribution can become “whatever the retailer reports.” | Common failure mode across all: paying for demand capture instead of demand creation. |
| What breaks first | Data trust: teams can’t reconcile platform numbers with GA/Shopify/ad platforms; disputes over “real” ROI slow scaling. | Attribution edge cases: cross-device, delayed conversions, and paid social/search overlap create uncomfortable “incrementality” questions. | Incentives: creators optimize for coupon/link conversion, not brand lift; commission costs rise as more partners compete for the same bottom-funnel traffic. | Partner quality control: fraud, self-referrals, and compliance issues increase as volume grows; ops becomes the bottleneck. | Measurement + ownership: limited customer data and restricted reporting make it hard to learn, retarget, or build durable first-party insights. | Stress-test questions: Can you audit events? Can you de-dupe? Can you run holdouts? Can you explain “why” not just “what”? |

All‑in‑one influencer + affiliate suites (discovery → workflow → payouts → dashboards)

These are the “do it all” platforms.

Upfluence is a clean example: creator discovery, marketplace applications, workflow management, promo codes, payments, and dashboards for revenue/ROI/AOV/commissions (Upfluence).

The upside: fewer moving parts.

The downside: you can end up trusting a single dashboard that’s doing a lot of interpretation behind the scenes.

That sounds fine until you read the terms and realize there’s a trap door. (Okay, not always “terms”—sometimes it’s just the attribution model.)

E‑commerce influencer platforms pushing “attributable ROI” (good promise, ask how it’s computed)

Aspire’s homepage literally leads with: “Attributable ROI… At last.” (Aspire)

Cool. Now ask:

- attributable to what model?

- with what lookback window?

- how do they dedupe code vs link?

- what happens when the user watches on one device and buys on another?

SalesHub’s cross-platform framing is the right mental model here: you’re trying to connect touchpoints across platforms, not crown a single winner (SalesHub).

If you disagree, I’m open to it—just show me what you’re measuring.

Creator affiliate + storefront platforms (conversion-focused landing pages as the ‘secret’)

Storefronts are not magic. They’re friction removal.

LoudCrowd positions “Creator Storefronts” as automated co-branded landing pages and claims they’re “the highest converting landing pages for any social traffic” (their words) alongside reporting/analytics and creator portals (LoudCrowd).

My operator take: storefronts can help because social traffic often lands cold and distracted. A co-branded page can reduce the “wait, where am I?” moment.

One more wrinkle: if your attribution is link-only and creators mostly drive “view then search,” storefronts won’t fix measurement. They just help conversion.

Affiliate ops tools (tracking + commissions + payouts) that can power the backend

Rewardful is the archetype here: Stripe integration, coupon code tracking, PayPal/Wise mass payouts, automated refund handling, self-referral fraud detection, and “last touch attribution” as a stated model (Rewardful).

Great for mechanics.

Not a creator marketplace by itself.

So before you change commissions, check the conversion path—otherwise you’re paying to mask a site problem.

Retail marketplace affiliate tools (Amazon/Walmart-focused) and why attribution gets weird fast

Upfluence mentions Amazon Attribution and payment integration for Amazon-focused influencer affiliate campaigns (Upfluence). Levanta positions itself as affiliate marketing software for sellers on Amazon and Walmart (Levanta).

Here’s the annoying part: retail marketplaces constrain customer data and attribution rules. You’ll be reconciling platform reporting with your own, and you won’t always like what you find.

If your top partner vanished next week, what would your revenue graph do?

AI matching: useful, but it’s not a substitute for performance evidence

AI matching is everywhere. And it can be genuinely helpful.

But it’s also a great way to scale mistakes.

TRIBE is refreshingly candid: its BrandMatch AI score is based on demographics and audience match, and it doesn’t consider average performance metrics or content quality/creativity (TRIBE). That limitation is the whole point. Demographic fit isn’t conversion.

Demographic fit scores vs performance predictors (don’t confuse them)

TRIBE describes BrandMatch AI as a percentage score for how precisely creator demographics/audience data match your campaign requirements (TRIBE).

That’s useful for avoiding obvious mismatch.

It’s not a performance predictor. Not by itself.

Social Native’s performance framing is closer to what you actually want: selecting creators based on predicted return, using historical conversion data and audience quality (Social Native).

Exactly.

Dataset advantage is real—so ask what data is proprietary vs scraped vs self-reported

LTK’s Match.AI announcement makes the “data moat” argument explicitly: it analyzes over 100 million data points and draws on 12+ years of creator/brand/shopper data (LTK).

And SoftBank’s Angela Du basically says the quiet part out loud: AI winners are positioned by access to vast, proprietary datasets (LTK).

Operator questions I’d ask anyway:

- What data feeds the model?

- How often does it refresh?

- Can I see why a creator was recommended (even a rough explanation)?

You don’t need more tactics; you need fewer unknowns.

Speed claims: “70% faster setup” is nice—until it ships bad matches at scale

Skeepers claims campaign setup time reduced by 70% via AI-powered invitations (Skeepers).

To be fair, speed matters.

But faster activation amplifies selection mistakes and tracking gaps. If you’re going to scale with AI matching, pair it with a holdout/test-and-scale process and a weekly tracking sanity check (SalesHub’s unified dashboard + UTMs + codes approach is a decent baseline) (SalesHub).

The win isn’t a spike. The win is a system you can trust next month.

Performance analytics checklist: what you should be able to answer in 30 minutes

This is my boring-but-effective audit section. Print it. Put it next to your coffee. (Out here in the Pacific Northwest, coffee is basically a project management tool.)

In 30 minutes, your platform should let you answer:

- Which creators are profitable after commissions/fees?

- What’s revenue, AOV, and payout status per creator?

- What’s the split of code vs link conversions?

- What’s time-to-conversion by platform (TikTok vs IG vs YouTube)?

- What attribution model is being used—and can you change it?

- Can you export raw rows (creator, post, link/code, order ID, timestamp)?

- How are refunds/chargebacks/reversals handled in ROI?

SalesHub’s recommendation set—unique identifiers, UTMs, promo codes, affiliate links, unified dashboard—is the minimum viable instrumentation (SalesHub). Upfluence’s dashboards (revenue/ROI/AOV/commissions) are an example of baseline reporting you should expect (Upfluence).

Don’t skip this step.
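One way to check the "raw export" question is to reconcile the platform's order rows against your store's own order records. This is a minimal sketch with made-up order IDs and amounts; the dictionaries stand in for whatever your platform and store actually export.

```python
# Hypothetical data: order_id -> revenue, as reported by each system.
platform_export = {"1001": 120.0, "1002": 80.0, "1004": 60.0}
store_orders = {"1001": 120.0, "1002": 75.0, "1003": 40.0}


def reconcile(platform: dict, store: dict, tolerance: float = 0.01) -> dict:
    """Flag platform-attributed orders that the store cannot confirm."""
    shared = set(platform) & set(store)
    return {
        # Attributed by the platform but absent from the store's records.
        "missing_in_store": sorted(set(platform) - set(store)),
        # Present in both, but the revenue amounts disagree.
        "amount_mismatch": sorted(
            oid for oid in shared if abs(platform[oid] - store[oid]) > tolerance
        ),
    }


issues = reconcile(platform_export, store_orders)
print(issues)
```

Orders that exist only in the store (like `1003` here) are not flagged, since most orders are not creator-driven. The two flagged buckets are the ones worth sampling by hand.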

Creator-level profitability: revenue, commissions, AOV, and payout status in one view

Upfluence explicitly calls out dashboards for Revenue, ROI, AOV, and commissions owed, plus individual tracking links per influencer (Upfluence).

Rewardful emphasizes the portal/links/coupon codes and API-driven custom dashboards (Rewardful).

Insist on creator-level rollups. Campaign totals hide freeloaders.
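Here is a small sketch of why the creator-level view matters. The creators, fees, and the 10% commission rate are all hypothetical, and "revenue minus cost" here ignores COGS, so treat it as a marketing-cost view rather than true profit.

```python
from collections import defaultdict

# Hypothetical order rows: (creator, order_revenue).
orders = [("ana", 100.0), ("ana", 140.0), ("ben", 60.0)]
flat_fees = {"ana": 50.0, "ben": 100.0}  # assumed flat fee per creator
COMMISSION_RATE = 0.10                   # assumed commission on revenue


def rollup(orders, flat_fees, rate):
    """Aggregate revenue, AOV, and cost per creator."""
    totals = defaultdict(lambda: {"revenue": 0.0, "orders": 0})
    for creator, revenue in orders:
        totals[creator]["revenue"] += revenue
        totals[creator]["orders"] += 1

    out = {}
    for creator, t in totals.items():
        cost = t["revenue"] * rate + flat_fees.get(creator, 0.0)
        out[creator] = {
            "revenue": t["revenue"],
            "aov": t["revenue"] / t["orders"],
            "cost": round(cost, 2),
            "revenue_minus_cost": round(t["revenue"] - cost, 2),
        }
    return out


by_creator = rollup(orders, flat_fees, COMMISSION_RATE)
campaign_total = round(sum(c["revenue_minus_cost"] for c in by_creator.values()), 2)
```

In this made-up data the campaign total is positive while one creator is underwater, which is exactly what a campaign-level number hides.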

Attribution model transparency: last-touch, linear, time-decay—pick one on purpose

SalesHub lays out linear and time-decay models in plain language (SalesHub). Metrics Watch summarizes GA4’s model options and notes GA4’s data-driven attribution approach (Metrics Watch).

My process:

- pick a model

- document it in a change log

- don’t compare performance across periods when the model changed (unless you enjoy telling yourself stories)

A lot of affiliate advice assumes you have perfect attribution. You don’t. Neither do I.
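For intuition on how the model choice moves credit around, here is a rough sketch of the three models as weight functions. The journey and the 7-day half-life are assumptions for illustration; real tools define their own decay and lookback parameters.

```python
def last_touch(n: int) -> list[float]:
    """All credit to the final touchpoint."""
    return [0.0] * (n - 1) + [1.0]


def linear(n: int) -> list[float]:
    """Equal credit to every touchpoint."""
    return [1.0 / n] * n


def time_decay(days_before_conversion: list[float], half_life_days: float = 7.0) -> list[float]:
    """Credit halves for every half-life between the touch and the conversion."""
    raw = [0.5 ** (d / half_life_days) for d in days_before_conversion]
    total = sum(raw)
    return [w / total for w in raw]


# Hypothetical journey: creator post seen 14 days before purchase,
# branded search 7 days before, direct visit on the day of purchase.
journey = ["tiktok_creator_post", "google_search", "direct_visit"]
days_before = [14.0, 7.0, 0.0]

for name, weights in [
    ("last_touch", last_touch(len(journey))),
    ("linear", linear(len(journey))),
    ("time_decay", time_decay(days_before)),
]:
    print(name, dict(zip(journey, (round(w, 3) for w in weights))))
```

The creator post gets nothing under last-touch, a third under linear, and about a seventh under this time-decay setting. Same journey, three different "results", which is why the model belongs in the change log.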

Cross-platform journeys: connect Instagram/TikTok/YouTube touchpoints to site/app conversion

SalesHub’s playbook is straightforward: UTMs + promo codes + affiliate links + unified dashboard (SalesHub).

That’s the baseline.

If you can’t do cross-platform, at least do cross-signal: link clicks + code redemptions + post timestamps + conversion timestamps. It’s not perfect, but it’s auditable.
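As a baseline for the UTM half of that setup, here is a minimal sketch of a link tagger using Python's standard `urllib.parse`. The parameter convention (`utm_medium=creator`, creator handle in `utm_content`) is an assumption of mine, not a standard; use whatever naming scheme your team documents.

```python
from urllib.parse import parse_qs, urlencode, urlparse, urlunparse


def tag_url(base_url: str, creator: str, platform: str, campaign: str) -> str:
    """Append UTM parameters to a URL, preserving any existing query parameters."""
    parts = urlparse(base_url)
    # Keep the first value of each existing parameter.
    params = {k: v[0] for k, v in parse_qs(parts.query).items()}
    params.update(
        {
            "utm_source": platform,
            "utm_medium": "creator",   # assumed convention
            "utm_campaign": campaign,
            "utm_content": creator,    # assumed: one creator handle per link
        }
    )
    return urlunparse(parts._replace(query=urlencode(params)))


link = tag_url("https://example.com/products/serum?ref=abc", "ana", "tiktok", "spring_launch")
print(link)
```

Generating links from one function (rather than by hand) is what keeps naming consistent enough to join clicks, codes, and orders later.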

Offline + QR + web-to-app: the attribution gap most influencer programs ignore

Linkrunner’s example is brutal (and familiar): offline spend shows up as “direct” installs, and you’re left guessing (Linkrunner).

Their fix is practical: campaign-specific links, UTM structure, deep link fallback behavior, and weekly validation workflows (Linkrunner).

If your influencer program touches QR, events, packaging, or web-to-app funnels, ask whether your hybrid platform supports this—or whether you need an MMP/link layer outside it.


Incentives and leakage: how “performance analytics” can lie to you

If you’ve ever stared at a reversal report at midnight, you’ll get it.

Attribution isn’t just math. It’s incentives.

SalesHub explains how last-click undervalues earlier influencer touchpoints (SalesHub). Impact.com frames multi-touch attribution as a core challenge in measuring influencer ROI (impact.com).

Here’s where last-click gravity quietly pulls the whole program off course.

You can build a dashboard that “proves” ROI while paying mostly for demand capture.

Codes vs links: when codes help measurement—and when they create a new mess

Codes are great when:

- links break (hello, app switching)

- users buy later on desktop

- you need a second measurement path

SalesHub recommends using UTMs plus promo codes in parallel (SalesHub). Upfluence emphasizes unique promo code generation compatible with major commerce platforms and Amazon (Upfluence).

✅ Pros

- Codes: survive app switching + cross-device journeys (people can type them later).

- Links: cleaner clickstream + landing-page context when the journey stays in one device/session.

- Codes: easy for creators to say/show; easy for shoppers to remember.

- Links: lower “did I enter it right?” friction (tap → cart) when UX is tight.

- Links: simpler to attribute when you can rely on a single identifier (click → session → order).

- Best practice: dual-track (code + link) gives redundancy and a fuller audit trail.

- Recommended default: issue both, then dedupe on purpose with a clear rule (e.g., link wins if present; otherwise code).

❌ Cons

- Codes: higher leakage risk (coupon sites/forums scrape and repost).

- Links: measurement breaks with cross-device/app switching (view on TikTok → buy later on desktop).

- Codes: user friction at checkout (remembering/typing; “where do I enter this?”).

- Links: can be blocked/stripped (in-app browsers, privacy settings, redirects, ad blockers).

- Dual-track: creates dedupe complexity (same order can match both code + link).

- Dual-track: without explicit rules, you’ll double-pay or mis-credit (and lose trust fast).

- Recommended default: don’t run “both” unless you’ve defined priority + lookback + tie-breakers and tested edge cases.

But codes create mess when:

- they leak into coupon sites

- they get shared in deal forums

- they become the primary reason someone buys (discount dependency)

Dual-track (link + code). Then dedupe on purpose.
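"Dedupe on purpose" can be as simple as a single function that every order passes through. This sketch implements the example rule mentioned above (link wins if present; otherwise code). The `Order` shape and creator names are hypothetical, and a real rule would also need a lookback window and tie-breakers.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Order:
    order_id: str
    link_creator: Optional[str]  # creator matched via tracked link click, if any
    code_creator: Optional[str]  # creator matched via promo code, if any


def credit_creator(order: Order) -> Optional[str]:
    """Return at most one creator per order: link wins if present, otherwise code."""
    if order.link_creator is not None:
        return order.link_creator
    return order.code_creator


orders = [
    Order("1001", link_creator="ana", code_creator="ben"),  # both signals, and they disagree
    Order("1002", link_creator=None, code_creator="ben"),   # code only
    Order("1003", link_creator=None, code_creator=None),    # no creator signal
]
credits = {o.order_id: credit_creator(o) for o in orders}
print(credits)
```

Because the function returns a single value, an order can never be paid out twice, whichever precedence you choose.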

Refunds, reversals, and fraud checks (if the platform can’t handle this, your ROI is fiction)

Rewardful calls out automated refund handling and self-referral fraud detection (Rewardful). That’s affiliate hygiene, and it matters because refunds can quietly inflate “ROI” if they’re not reconciled back to the creator.

Metrics Watch also flags fraud detection as part of what partnership attribution tools can include (via its Impact.com summary) (Metrics Watch).

If the platform can’t net out refunds and chargebacks in reporting, your ROI is fiction.

This is not a rounding error.
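A minimal sketch of what "netting out" means in practice, assuming an export with a refunded amount per order and a flat 10% commission paid on net revenue. Both the row shape and the rate are hypothetical.

```python
from collections import defaultdict

# Hypothetical export rows: (creator, order_id, gross_revenue, refunded_amount).
# refunded_amount is assumed to cover refunds and chargebacks alike.
rows = [
    ("ana", "1001", 120.0, 0.0),
    ("ana", "1002", 80.0, 80.0),   # fully refunded
    ("ben", "1003", 200.0, 50.0),  # partially refunded
]
COMMISSION_RATE = 0.10  # assumed flat rate, paid on net revenue


def net_by_creator(rows):
    """Sum revenue net of refunds per creator, plus the commission owed on it."""
    net = defaultdict(float)
    for creator, _order_id, gross, refunded in rows:
        net[creator] += gross - refunded
    return {
        creator: {"net_revenue": value, "commission": round(value * COMMISSION_RATE, 2)}
        for creator, value in net.items()
    }


report = net_by_creator(rows)
print(report)
```

On gross numbers, "ana" would show $200 in revenue; net of the refund it is $120, and the commission should follow the net figure.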

How to evaluate a platform: a stress-test scorecard (Mara’s version)

I realize I’m biased toward systems that are easy to audit.

Still, here’s the scorecard I use:

- Data access: raw export at order/event level?

- Attribution models: last-touch only, or options (linear/time-decay/data-driven)?

- Integrations: Shopify + analytics + CRM; can you pass UTMs/subIDs cleanly?

- Payout automation: mass payouts, multi-currency, tax/legal docs.

- Creator UX: portal, briefs, stats, “time to first post.”

- Rights + paid amplification readiness: can you reuse content cleanly?

- Support model: self-serve vs managed services; reporting transparency.

Upfluence positions “integrate your own marketing stack” and compatibility with Shopify/WooCommerce/Magento/BigCommerce/Amazon (Upfluence). Metrics Watch’s tool roundup is useful for thinking about attribution tooling outside the influencer platform itself (GA4, Impact.com, etc.) (Metrics Watch).

I’d rather you ship one clean test than ten messy partnerships you can’t explain.

Integration reality check: can it plug into your stack without duct tape?

Upfluence lists broad commerce compatibility and stack integrations (Upfluence). Metrics Watch notes GA4 has API access and integrates with Google tools, but can be weak on cross-device tracking (Metrics Watch).

So test:

- UTMs preserved end-to-end

- code/link dedupe rules

- export granularity

- postback/webhook options (if you’re serious)

Creator experience: portals, storefronts, and “time to first post”

LoudCrowd emphasizes a creator portal where creators manage storefronts, stats, commissions, and briefs (LoudCrowd). Skeepers emphasizes one-click onboarding/invitations for speed (Skeepers).

Creator UX is a performance lever. Fewer steps means more activation.

And yes, influencers change the math here, especially with codes.

Managed services vs self-serve: when outsourcing is actually the cheaper option

Brandbassador sells managed services with monthly reports and claims expertise across many brands (Brandbassador). Impact.com’s ROI framework is a good reminder that measurement discipline is part of the work, not an “extra” (impact.com).

If you don’t have ops bandwidth for approvals, creative QA, and reporting hygiene, managed services can be cheaper than hiring—if you can still audit the data.

If this feels strict, good—it means you’re building something that can survive volatility.

Implementation blueprint: launch a hybrid program without lying to yourself about attribution

I’m going to flatten this a bit, but the sequence matters.

Checklist

- ☐ GO/NO-GO, Step 1: Decide the compensation model (and what it implies for attribution)

  - ☐ Artifact: Comp model decision documented (CPS/CPA, flat fee, hybrid, bonuses)

  - ☐ Artifact: Eligibility rules written (who qualifies, what counts as a conversion, exclusions)

  - ☐ Artifact: Success metrics defined (primary + guardrails: CAC/ROAS, AOV, refund rate, new vs returning)

- ☐ GO/NO-GO, Step 2: Lock the tracking schema before anyone posts

  - ☐ Artifact: UTM / code / link schema finalized (naming conventions, required parameters, examples)

  - ☐ Artifact: Dedupe rule defined (code vs link precedence, last-click vs assist handling, window)

  - ☐ Artifact: Export test completed (raw events + orders + payouts reconcile; IDs join cleanly)

- ☐ GO/NO-GO, Step 3: Put the audit trail on rails (so reporting doesn’t drift)

  - ☐ Artifact: Weekly sanity checks scheduled (sample orders, top creators, anomaly thresholds, refunds/returns)

  - ☐ Artifact: Change log created (owner + required fields: date, change, reason, impacted creators, effective date)

  - ☐ Artifact: Change log fields agreed (schema/version, attribution rule changes, payout rule changes, tracking updates)

- ☐ FINAL GO/NO-GO: All artifacts stored in one place and linked from the program brief

Step 1: Decide what you’re paying for (discovery, persuasion, or checkout interception)

What behavior are you paying for—discovery, persuasion, or checkout interception?

Impact.com recommends hybrid compensation models (base fee + performance incentives) for long-term partnerships (impact.com). That’s usually the cleanest alignment when you want creators to invest in quality and outcomes.

Pay for value creation, not value capture.

Step 2: Set up tracking that survives the messy middle (UTMs, codes, dashboards, exports)

SalesHub’s minimum viable setup is the right starting point: unique identifiers, UTMs, promo codes, affiliate links, unified dashboard (SalesHub). Upfluence supports unique promo codes and campaign dashboards (revenue/ROI/AOV/commissions) (Upfluence).

Weekly sanity check:

- do clicks roughly align with sessions?

- do code redemptions align with creator posting cadence?

- are “direct/none” conversions spiking suspiciously?

Track it. Then verify it.
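The weekly check can be automated as a couple of threshold tests. This is a sketch only: the thresholds (sessions between 50% and 120% of clicks, a 10-point jump in direct/none share) are placeholders I made up, and you would tune them to your own baseline.

```python
def sanity_flags(
    clicks: int,
    sessions: int,
    direct_share_this_week: float,
    direct_share_baseline: float,
    min_ratio: float = 0.5,         # assumed lower bound for sessions / clicks
    max_ratio: float = 1.2,         # assumed upper bound for sessions / clicks
    spike_threshold: float = 0.10,  # assumed: 10-point rise in direct/none share
) -> list[str]:
    """Return the names of the weekly checks that failed."""
    flags = []
    ratio = sessions / clicks if clicks else 0.0
    if not (min_ratio <= ratio <= max_ratio):
        flags.append("clicks_vs_sessions")
    if direct_share_this_week - direct_share_baseline > spike_threshold:
        flags.append("direct_none_spike")
    return flags


# Hypothetical weeks: one where tracking looks broken, one that looks healthy.
broken_week = sanity_flags(clicks=1000, sessions=300, direct_share_this_week=0.45, direct_share_baseline=0.30)
healthy_week = sanity_flags(clicks=1000, sessions=800, direct_share_this_week=0.31, direct_share_baseline=0.30)
print(broken_week, healthy_week)
```

A flag is a prompt to go look at sample orders, not a verdict. The code redemption vs posting cadence check is left out here because it needs post timestamps from your platform export.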

Step 3: Run a test you can audit (and write it down in a change log)

Use a small cohort. Two to four weeks is usually enough to see signal (unless your sales cycle is long).

Document:

- attribution model + windows

- offer terms + exclusions

- creator cohort criteria

- creative constraints

- what “success” means (CPA/CAC proxy + contribution margin proxy)

Metrics Watch’s overview of attribution tools is a reminder that you may need GA4 or a dedicated partner attribution tool alongside your creator platform (Metrics Watch).

If you’re stuck, start with the funnel map and a change log. Future-you will thank you.
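
A change log does not need tooling to be useful, but giving it a fixed shape keeps entries comparable. The sketch below is one possible structure, assuming the fields from the checklist (owner, date, change, reason, impacted creators, effective date) plus a category for the rule type. `ChangeLogEntry` and the category labels are hypothetical names chosen for illustration.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import date

# Assumption: category labels mirror the checklist (schema, attribution, payout, tracking).
CATEGORIES = {"schema", "attribution_rule", "payout_rule", "tracking"}


@dataclass
class ChangeLogEntry:
    logged_on: date
    owner: str
    category: str
    change: str
    reason: str
    effective_date: date
    impacted_creators: list[str] = field(default_factory=list)

    def __post_init__(self) -> None:
        # Reject entries that would be useless in an audit later.
        if self.category not in CATEGORIES:
            raise ValueError(f"unknown category: {self.category}")
        for name in ("owner", "change", "reason"):
            if not getattr(self, name).strip():
                raise ValueError(f"{name} is required")

    def to_json_line(self) -> str:
        """Serialize to one JSON line, suitable for an append-only log file."""
        row = asdict(self)
        row["logged_on"] = self.logged_on.isoformat()
        row["effective_date"] = self.effective_date.isoformat()
        return json.dumps(row, sort_keys=True)
```

Keeping `logged_on` and `effective_date` separate matters when a rule is agreed on one day and applied to orders from another.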

Long-term vs one-off: how hybrids support always-on programs (and when you shouldn’t)

Impact.com notes that 63% of brands prefer sustained collaborations and frames long-term partnerships as better for CLV, cost efficiency, and predictability, while one-offs remain useful for launches and testing (impact.com).

So before you build “always-on,” ask: do you have the workflow and tracking to support it?

Always-on creator programs: treat creators like ad sets (test, scale, rotate)

Social Native describes always-on activation and treating creator content as scalable assets, including paid amplification as a multiplier (Social Native). Impact.com’s long-term framework supports the idea that sustained partnerships can reduce CAC over time (impact.com).

Practical rule:

- test small

- scale winners

- rotate creative to avoid fatigue

- pause anything you can’t explain

Try it on one offer, one partner type, one traffic source. Then scale what holds up.

Common gaps platforms don’t address well (so you should ask directly)

SalesHub and Linkrunner both make the same point from different angles: cross-platform attribution is hard, and “direct/organic” buckets swallow your signal when tracking breaks (SalesHub, Linkrunner).

TRIBE’s limitation note is the AI version of the same truth: models don’t measure everything you wish they measured (TRIBE).

So ask directly about:

- offline/QR/web-to-app support

- attribution model transparency

- refund/reversal handling

- export granularity

- incrementality testing options

- AI explainability (inputs + what’s excluded)

You don’t need more tactics; you need fewer unknowns.
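
On export granularity specifically, a quick test is whether the platform's order-level export joins to your store's orders by ID. The sketch below assumes each exported row is a dict with an `order_id` key and that you already have the set of store order IDs; both shapes, and the `reconcile` name, are assumptions for illustration.

```python
def reconcile(platform_rows: list[dict], store_order_ids: set[str]) -> dict[str, set[str]]:
    """Compare a platform's order-level export against the store's order IDs."""
    seen: set[str] = set()
    duplicates: set[str] = set()
    for row in platform_rows:
        order_id = row["order_id"]
        if order_id in seen:
            # The same order credited more than once (for example, code and link both paid).
            duplicates.add(order_id)
        seen.add(order_id)
    return {
        "matched": seen & store_order_ids,
        # Credited by the platform but absent from the store's records.
        "missing_from_store": seen - store_order_ids,
        "duplicate_credit": duplicates,
    }
```

Store orders that never appear in the platform export are expected, since not every order is creator-driven. The two buckets to chase are `missing_from_store` and `duplicate_credit`.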

The 12 questions I’d ask on a demo call (copy/paste)

- What attribution models do you support (last-touch, linear, time-decay, data-driven), and can we switch? (SalesHub, Metrics Watch)

- What are your default lookback windows for clicks and codes?

- How do you dedupe link + code when both exist?

- Can we export raw order-level rows (order ID, timestamp, creator ID, code/link, platform)?

- How do refunds/chargebacks flow back into creator ROI? (Rewardful)

- What fraud checks exist (self-referral, suspicious patterns)? (Rewardful)

- Can we pass subIDs/UTMs cleanly into our analytics stack?

- What native integrations exist for our commerce platform (Shopify/Woo/etc.)? (Upfluence)

- Do you support Amazon Attribution / marketplace constraints, and what data do we not get? (Upfluence)

- What does “ROI” mean in your dashboard—ROI or ROAS? (Show formula.) (impact.com)

- What data powers AI matching, and what does it explicitly not consider? (TRIBE)

- How fast can a creator go from invite → approved → first post, and what’s the drop-off?

If they can’t answer these cleanly, that’s your answer.
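
When asking a vendor to show the formula behind “ROI,” it helps to have your own definitions written down first. The sketch below uses one common pair of definitions, both net of refunds; vendors may define these differently, which is the reason to ask. It assumes `spend` covers fees, commissions, and product costs, and `gross_margin_rate` is a simplifying stand-in for contribution margin.

```python
def roas(gross_revenue: float, refunds: float, spend: float) -> float:
    """Return on ad spend: net attributed revenue per unit of spend."""
    if spend <= 0:
        raise ValueError("spend must be positive")
    return (gross_revenue - refunds) / spend


def roi(gross_revenue: float, refunds: float, gross_margin_rate: float, spend: float) -> float:
    """Return on investment: margin earned beyond spend, per unit of spend."""
    if spend <= 0:
        raise ValueError("spend must be positive")
    contribution = (gross_revenue - refunds) * gross_margin_rate
    return (contribution - spend) / spend
```

With 10,000 in attributed revenue, 1,000 in refunds, 3,000 in spend, and a 50% margin, these definitions give a ROAS of 3.0 and an ROI of 0.5. The same campaign looks very different depending on which number the dashboard labels “ROI,” and neither figure says anything about incrementality.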

Bottom line: pick the platform that matches your failure modes, not your wishlist

Social Native’s framing is the north star: creator programs are being held to CAC/ROAS/revenue contribution standards, enabled by better integrations and real-time conversion visibility (Social Native). SalesHub’s framing is the operational reality: cross-platform attribution is the difference between “influencer works” and “we can prove what it did” (SalesHub).

So pick based on what breaks in your world:

- If you’re drowning in creator volume: workflow + payouts + portal UX matter most.

- If finance is breathing down your neck: exports + refunds + model transparency matter most.

- If your journeys are cross-device/offline/web-to-app: you need a link/MMP (mobile measurement partner) layer that won’t collapse.

Start with one clean test. Validate tracking weekly. Scale only what holds up.

The win isn’t a spike. The win is a system you can trust next month.

Sources

- Social Native — Creator Performance Marketing: A 2026 Playbook for Scalable ROI

- SalesHub — Cross-Platform Attribution: Track Every Influencer Touchpoint That Drives Your Sales

- Upfluence — Influencer & Affiliate Marketing Platform

- impact.com — The ROI of long-term creator partnerships vs one-off

- Rewardful — Affiliate Software for SaaS

- LoudCrowd — Creator Affiliate Marketing Programs

- TRIBE — BrandMatch AI

- Skeepers — Using AI to Connect Your Brand with the Right Influencers

- LTK — Introducing LTK Match.AI

- Metrics Watch — Best Tools for Cross-Channel Attribution in 2025

- Linkrunner — Tracking QR Codes, Offline Ads, and Web-to-App Journeys

- Levanta — Amazon and Walmart Affiliate Marketing Software for Sellers