LLM Intent Scoring for Affiliate Link Placement: Paragraph-Level CTR Tests With SubIDs

I wasn’t sure link position mattered this much at first, but the data tells a different story.

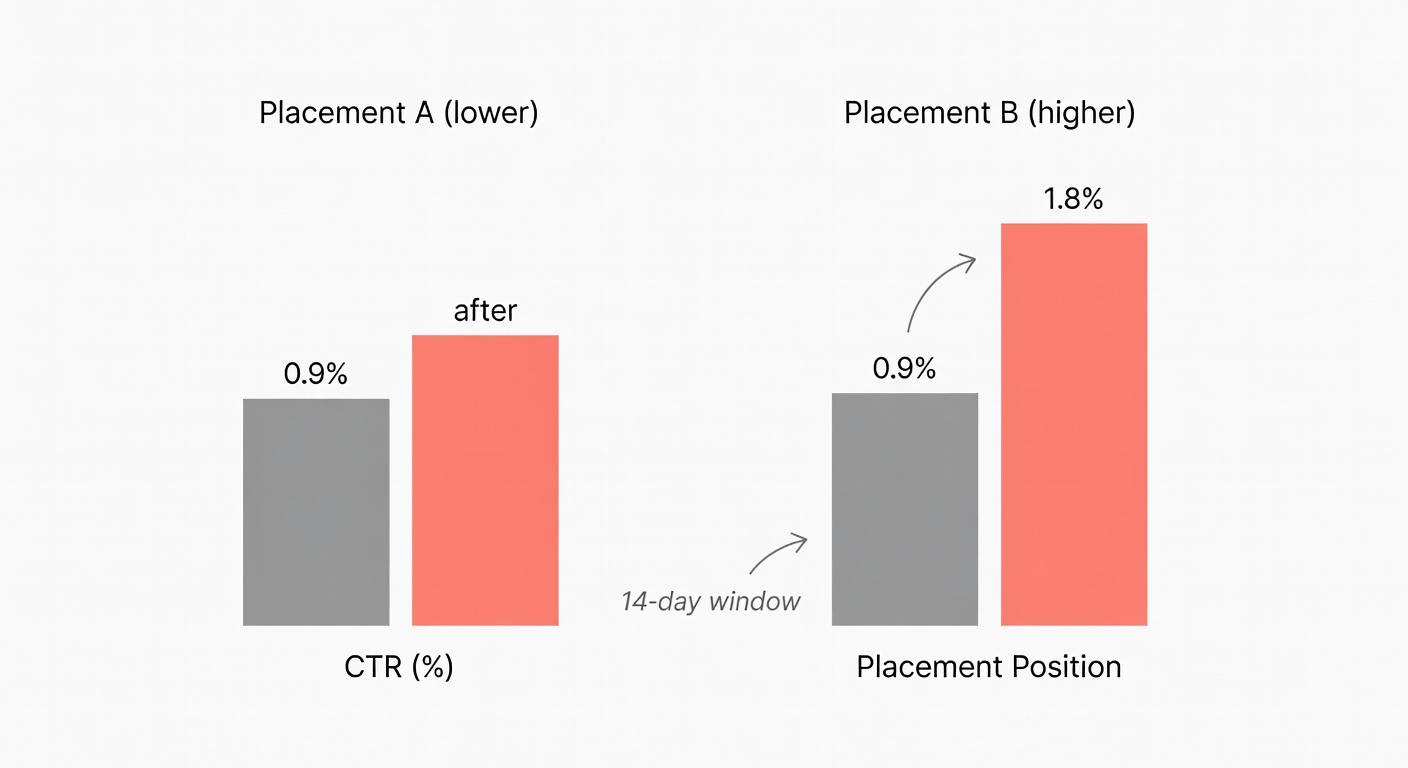

Two Tuesdays ago, I moved a single “Best overall” affiliate link up six paragraphs in a 3,200-word review—same link count, same offers, same page. CTR on that placement went from 0.9% to 1.8% over the next 14 days.

Nothing magical happened.

I just stopped hiding the link in a low-intent paragraph that most readers never reached.

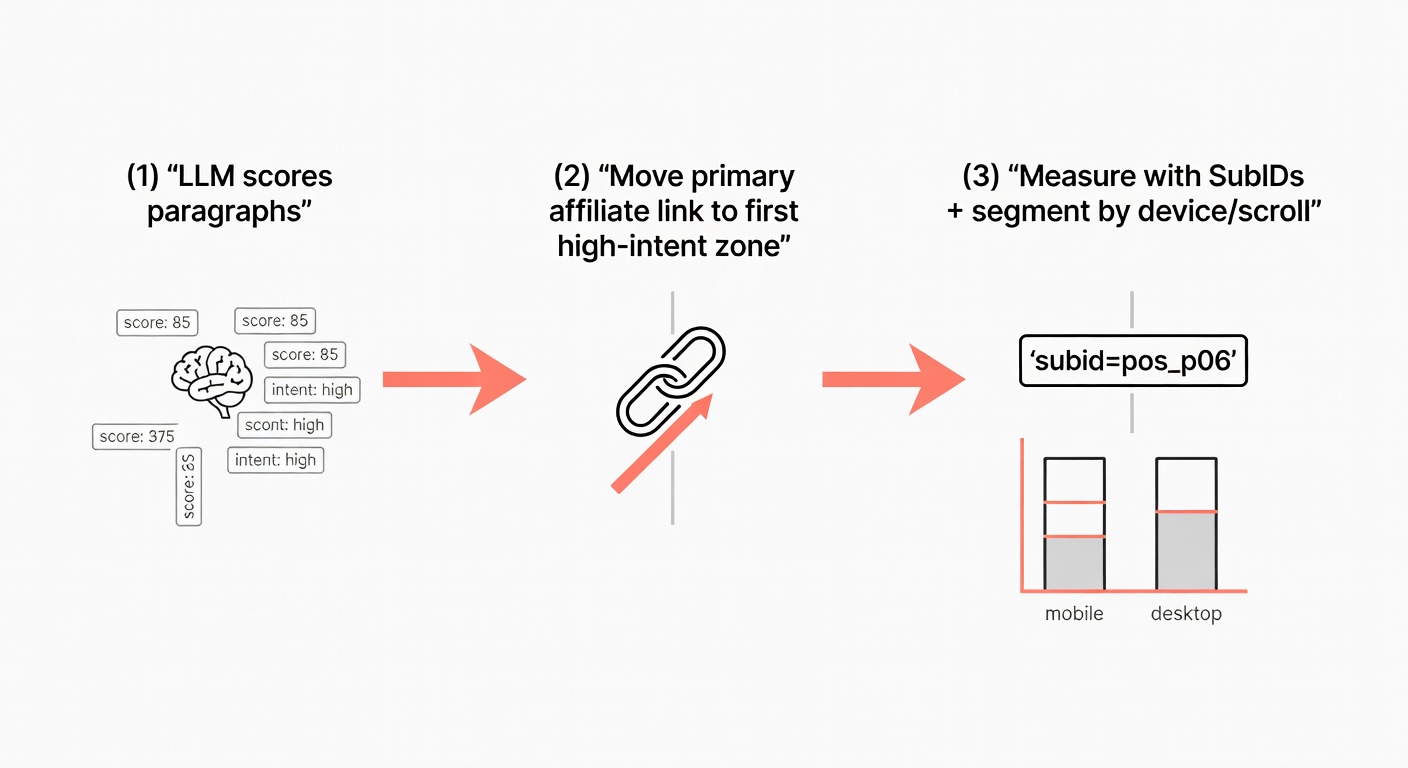

My thesis: link placement is an intent-alignment problem, and we can treat it like an engineering experiment—score intent at paragraph level with an LLM, reposition links to the first high-intent zones, then measure CTR deltas by placement using SubIDs.

From here, I’m going to do this in the same order I run it on real pages: first quantify the attention/position penalty (so we’re not guessing), then show the paragraph-level intent scoring prompt, and finally instrument the before/after with SubIDs so the “win” is attributable—not vibes.

1) Why link position inside an article matters more than link count

When I audit underperforming money pages, the pattern is rarely “not enough links.” It’s usually “links in the wrong places”: too low on the page, or embedded in paragraphs that still read like setup. Adding more links just adds more variables to misplace.

The part that doesn’t get enough attention is the interaction between attention distribution and reader intent. You can add ten links, but if eight of them sit below the point where most readers stop paying attention—or sit inside paragraphs that are informational—you’ve basically created decorative tracking parameters.

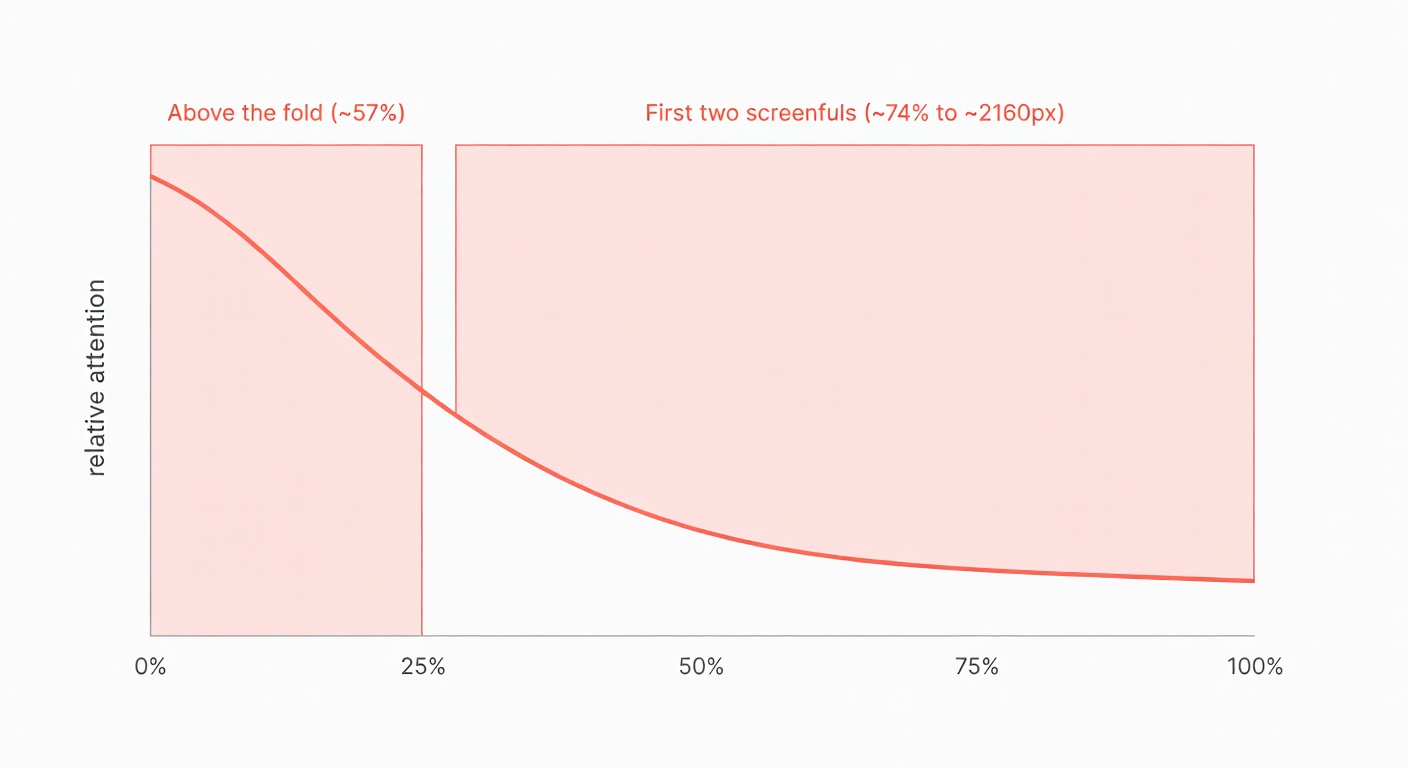

Attention is top-heavy even when users scroll (what the eye-tracking actually says)

NN/g ran an eyetracking analysis across 130,000+ fixations and found something that should make every affiliate editor a bit uncomfortable: users do scroll, but attention still clusters near the top.

Two numbers matter:

- ~57% of viewing time above the fold

- ~74% of viewing time in the first two screenfuls (up to ~2160px)

That’s across a mix of page types and tasks, not cherry-picked ecommerce pages (NN/g).

So here’s the rub.

If your first truly commercial paragraph is sitting at “screenful 4,” you’re asking a minority of sessions to do the monetising.

And yes, mobile changes what “fold” means.

But the top-heaviness doesn’t disappear; it just compresses into smaller chunks of content per screen.

This also explains why the old “F-pattern” idea keeps showing up in practice: people disproportionately scan early and left-aligned content first, which makes inline links in early paragraphs and top rows of comparison tables structurally advantaged. (I realise I’m biased toward anything that looks like a deterministic UI pattern, but bear with me.)

From scroll depth to click opportunity: build a position-decay curve (instead of guessing)

Now, you might be wondering—how does this actually work in practice?

I stop arguing about “best placements” in Slack and build a position-decay curve from my own data.

Step one is scroll depth distribution. I like tools that give me more than GA4’s default 90% scroll event; Plausible tracks scroll depth automatically across the full 1–100% range, which is handy for long-form content (Plausible).

Step two is click logs by placement. That’s where SubIDs come in (we’ll get there).

Then I compute something simple:

Expected clicks per 1,000 sessions at each depth band = (sessions per 1,000 that reach the depth band) × (CTR of placements primarily viewed in that band)

You don’t need perfect causality.

You need a stable baseline that lets you detect whether moving a link from “mostly 50–75% readers” to “mostly 0–25% readers” changes click yield.
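To make the curve concrete, here is a minimal sketch of that calculation. The band labels, reach shares, and CTRs are made-up illustrative inputs, not measurements, and I'm assuming CTR is measured against the sessions that actually reach each band.

```python
from dataclasses import dataclass

@dataclass
class DepthBand:
    label: str          # e.g. "0-25%" of page depth
    reach_share: float  # fraction of all sessions that scroll into this band
    ctr: float          # clicks / sessions reaching the band (assumed definition)

def expected_clicks_per_1000(bands):
    """Return {band label: expected clicks per 1,000 page sessions}."""
    return {b.label: round(1000 * b.reach_share * b.ctr, 2) for b in bands}

# Illustrative numbers only; replace with your own scroll and click data.
bands = [
    DepthBand("0-25%", 1.00, 0.020),
    DepthBand("25-50%", 0.70, 0.015),
    DepthBand("50-75%", 0.45, 0.012),
    DepthBand("75-100%", 0.25, 0.010),
]
curve = expected_clicks_per_1000(bands)
for label, clicks in curve.items():
    print(f"{label:>8}: {clicks} expected clicks per 1,000 sessions")
```

Reading the result top to bottom gives the decay: each band's yield is capped by how many sessions ever get there, whatever the per-view CTR looks like.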

One more nuance: scroll depth tracking has quirks—anchor links can fire multiple thresholds instantly, and short pages can make low thresholds meaningless (GrowthLearner). I treat scroll depth as a sanity check, not gospel.

Why “more links” often underperforms: dilution, choice overload, and misaligned intent

The catch, however, is that link count is a blunt instrument.

When I’ve audited underperforming money pages, the pattern is usually one of these:

- Dilution: too many similar links in the same zone; per-link CTR drops even if total clicks rise.

- Choice overload: readers hit a cluster of options before they’ve formed a preference, so they postpone clicking (which often means “never”).

- Intent mismatch: links embedded in paragraphs that are still defining terms, setting context, or telling a story—stuff that reads informational.

SubIDs are great at exposing this. impact.com explicitly calls out using subIDs to identify factors that increase conversion rates, including link type and placement (impact.com).

But measurement alone doesn’t tell you where to move links.

Next, I’ll show the part that actually generates placement hypotheses: paragraph-level intent scoring, so you can find the first “choosing” paragraph instead of guessing where it “feels” commercial.

2) How to build and run the LLM intent classification prompt on an existing article

Walking through this step-by-step reveals some unexpected quirks.

The biggest one: page-level intent is too coarse for placement work.

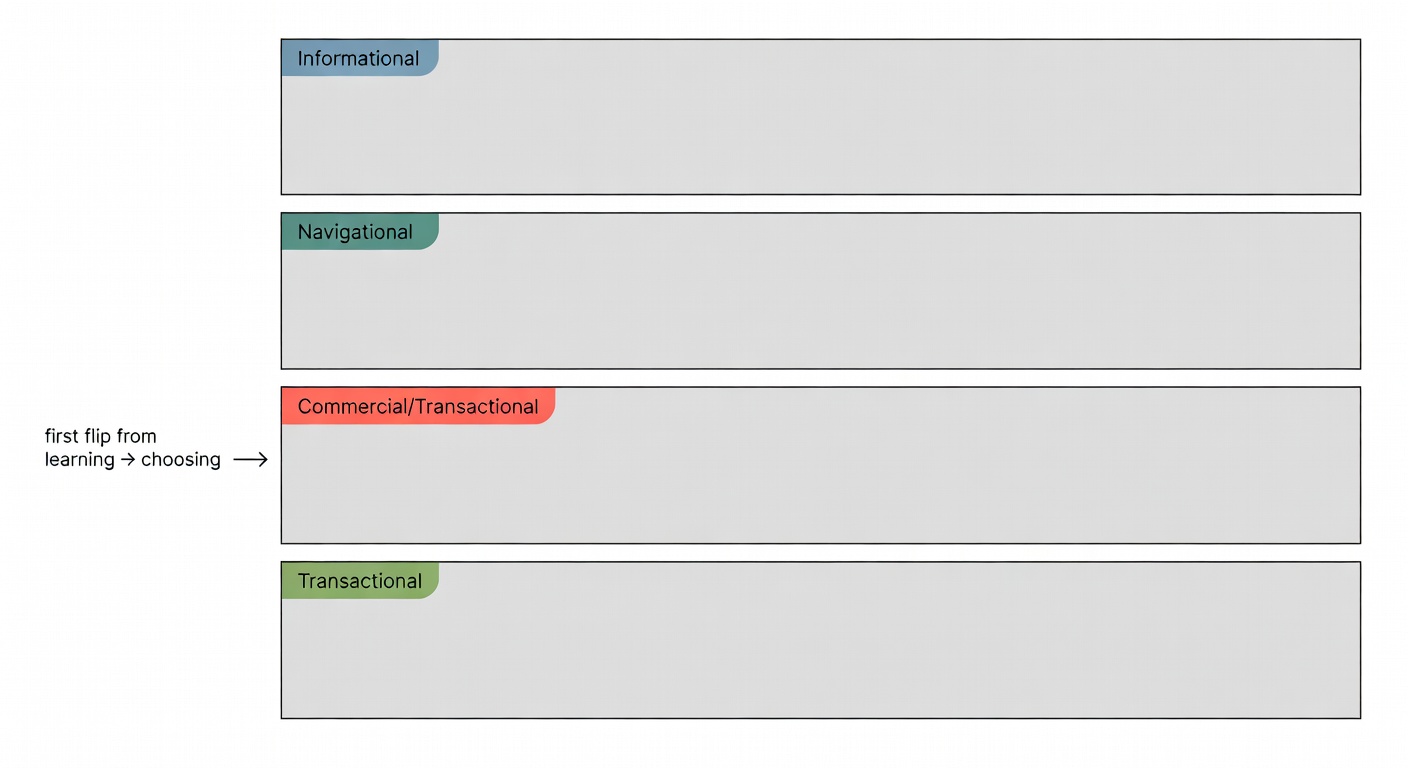

I’m not trying to decide whether the page is commercial. I already know it is; it’s an affiliate article. I’m trying to find the first paragraph where the reader’s mental state flips from “learning” to “choosing.”

Paragraph-level intent taxonomy (and what ‘commercial’ means in practice)

I use a standard four-way taxonomy, but operationalised for paragraph-level decisions:

- Informational: definitions, background, problem framing, “how it works,” non-product explanations.

- Navigational: “go to X,” “use Y tool,” “click here to find Z,” brand/site routing.

- Commercial: evaluation language—criteria, comparisons, “best for,” trade-offs, feature/value judgement.

- Transactional: explicit purchase intent—pricing, deals, “buy,” “get,” “sign up,” strong recommendation + action framing.

Paragraphs can be mixed-intent.

So I score two axes—commercial and transactional—then assign a primary label.

This also ties into the current AI discovery mess. Acceleration Partners notes that a significant share of consumers are using generative AI for product recommendations (Acceleration Partners), and Hello Partner’s reporting suggests affiliate citations vary wildly by platform (Copilot higher, Google models lower) (Hello Partner). Net effect: fewer clicks overall, but the clicks you do get skew higher intent—so placement near high-intent paragraphs matters even more.

(And yes, that’s slightly terrifying.)

Working prompt: classify, score, and recommend link moves (copy/paste ready)

Here’s the prompt I’ve been running as of last month.

It’s model-agnostic; I’ve used it with GPT-class models and Claude-class models with minor tweaks.

System

You are an intent classifier for affiliate content optimisation.

Task: classify EACH paragraph independently using a 4-part intent taxonomy:

- informational

- navigational

- commercial

- transactional

Output must be valid JSON only. No markdown. No extra text.

Definitions (operational):

- informational: explains concepts, context, problems, or methods without evaluating products.

- navigational: directs the reader to a site/brand/page or tells them where to click/go.

- commercial: compares options, discusses selection criteria, pros/cons, “best for”, evaluation language.

- transactional: urges or enables purchase/action; pricing/deals; strong “do this now” framing.

Scoring:

- commercial_score: 0-100 (strength of evaluation/selection intent)

- transactional_score: 0-100 (strength of purchase/action intent)

- confidence: 0-1 (your certainty in the label)

Link action recommendation rules:

- If commercial_score >= 70 OR transactional_score >= 60: recommended_link_action = "primary_link_ok"

- If commercial_score 40-69: recommended_link_action = "secondary_link_ok"

- If commercial_score < 40 AND transactional_score < 40: recommended_link_action = "no_link_here"

- If paragraph is informational but immediately precedes a high-intent paragraph, you may suggest "move_link_down_1" (rare).

Constraints:

- Do not invent product claims.

- Reference paragraph_id in rationale.

- rationale must be <= 240 characters.

User

{

"article_topic": "Best password managers for small teams",

"paragraphs": [

{"paragraph_id":"p01","text":"Most teams don’t get breached because of Hollywood hackers—they get breached because someone reused a password across tools."},

{"paragraph_id":"p02","text":"A password manager fixes that by generating unique passwords, sharing access safely, and reducing the number of ‘reset my login’ tickets."},

{"paragraph_id":"p03","text":"If you’re choosing one for a team, I’d prioritise: admin controls, shared vaults, SSO support, and audit logs."},

{"paragraph_id":"p04","text":"My pick for best overall is Vendor A because its admin console is sane, and the shared-vault workflow doesn’t feel bolted on."},

{"paragraph_id":"p05","text":"Vendor A pricing starts at $6/user/month, and the Teams plan includes shared vaults plus basic reporting."},

{"paragraph_id":"p06","text":"If you only need a family-style shared vault and don’t care about audit logs, Vendor B is cheaper and simpler."},

{"paragraph_id":"p07","text":"One caveat: if your org already uses Microsoft 365 heavily, check whether your identity setup makes SSO a requirement."}

]

}
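If you'd rather not hand-build that payload, a small helper can do it. This is a sketch under one assumption: paragraphs in your source text are separated by blank lines. The function name build_payload is mine, not part of any library.

```python
import json

def build_payload(article_topic, article_text):
    """Split text on blank lines and assign p01, p02, ... paragraph IDs."""
    paragraphs = [p.strip() for p in article_text.split("\n\n") if p.strip()]
    return {
        "article_topic": article_topic,
        "paragraphs": [
            {"paragraph_id": f"p{i:02d}", "text": text}
            for i, text in enumerate(paragraphs, start=1)
        ],
    }

article_text = (
    "Most teams get breached because someone reused a password.\n\n"
    "A password manager fixes that by generating unique passwords.\n\n"
    "If you're choosing one for a team, prioritise admin controls and SSO."
)
payload = build_payload("Best password managers for small teams", article_text)
# ensure_ascii=False keeps curly quotes readable in the user message.
print(json.dumps(payload, ensure_ascii=False, indent=2))
```

Stable paragraph IDs matter more than the splitting logic: they are what the placement IDs later hang off.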

Sample input + scoring output (mini audit of a real-ish affiliate article)

Here’s what I typically get back (trimmed for space, but format intact):

[

{"paragraph_id":"p01","intent_label":"informational","commercial_score":10,"transactional_score":0,"confidence":0.84,"recommended_link_action":"no_link_here","rationale":"p01 frames the problem; no evaluation or purchase language."},

{"paragraph_id":"p02","intent_label":"informational","commercial_score":18,"transactional_score":2,"confidence":0.82,"recommended_link_action":"no_link_here","rationale":"p02 explains what a password manager does; still pre-selection."},

{"paragraph_id":"p03","intent_label":"commercial","commercial_score":72,"transactional_score":8,"confidence":0.78,"recommended_link_action":"primary_link_ok","rationale":"p03 lists selection criteria; reader is in evaluation mode."},

{"paragraph_id":"p04","intent_label":"transactional","commercial_score":80,"transactional_score":62,"confidence":0.76,"recommended_link_action":"primary_link_ok","rationale":"p04 makes a best-overall recommendation; strong action adjacency."},

{"paragraph_id":"p05","intent_label":"transactional","commercial_score":55,"transactional_score":70,"confidence":0.74,"recommended_link_action":"primary_link_ok","rationale":"p05 discusses pricing; purchase intent is explicit."},

{"paragraph_id":"p06","intent_label":"commercial","commercial_score":66,"transactional_score":22,"confidence":0.73,"recommended_link_action":"secondary_link_ok","rationale":"p06 is a conditional alternative; good for secondary placement."},

{"paragraph_id":"p07","intent_label":"commercial","commercial_score":44,"transactional_score":10,"confidence":0.69,"recommended_link_action":"secondary_link_ok","rationale":"p07 is a constraint check; mild evaluation context."}

]
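Because the model applies the threshold rules itself, I find it worth re-checking them deterministically. The sketch below re-applies the prompt's rules to each row and flags mismatches. The "needs_review" label is my own addition for a case the rules leave open (commercial_score under 40 with transactional_score between 40 and 59), and the function names are hypothetical.

```python
import json

ALLOWED_LABELS = {"informational", "navigational", "commercial", "transactional"}

def rule_based_action(commercial, transactional):
    """Re-apply the prompt's threshold rules to a pair of scores."""
    if commercial >= 70 or transactional >= 60:
        return "primary_link_ok"
    if commercial >= 40:
        return "secondary_link_ok"
    if transactional < 40:
        return "no_link_here"
    # The stated rules do not cover commercial < 40 with transactional 40-59.
    return "needs_review"  # hypothetical label, not part of the prompt

def check_rows(raw_json):
    """Return a list of human-readable problems found in the model output."""
    problems = []
    for row in json.loads(raw_json):
        pid = row.get("paragraph_id", "?")
        if row.get("intent_label") not in ALLOWED_LABELS:
            problems.append(f"{pid}: unknown intent_label")
        rationale = row.get("rationale", "")
        if len(rationale) > 240 or pid not in rationale:
            problems.append(f"{pid}: rationale too long or missing paragraph_id")
        expected = rule_based_action(row["commercial_score"], row["transactional_score"])
        actual = row.get("recommended_link_action")
        # "move_link_down_1" is a judgement call the prompt allows, so skip it.
        if actual != "move_link_down_1" and actual != expected:
            problems.append(f"{pid}: got {actual}, rules imply {expected}")
    return problems

sample = json.dumps([
    {"paragraph_id": "p03", "intent_label": "commercial", "commercial_score": 72,
     "transactional_score": 8, "confidence": 0.78,
     "recommended_link_action": "primary_link_ok",
     "rationale": "p03 lists selection criteria; reader is in evaluation mode."},
])
print(check_rows(sample))
```

An empty list means the row is internally consistent with the rules; it says nothing about whether the scores themselves are right.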

My decision criteria are blunt on purpose:

- Primary “Best overall” link: first paragraph with commercial_score ≥ 70 (often criteria) or the first recommendation paragraph if it appears earlier.

- Secondary links: paragraphs scoring 40–69 where the reader is comparing or segmenting.

- No links: anything below 40 unless it’s a table or a very intentional CTA block.

In this example, I’d move the primary link to sit in p03 or p04, not p05.

Because p03 is where the reader starts choosing, and p04 is where they commit.
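The score-based part of those criteria is simple enough to sketch in code. This assumes rows arrive in document order and only uses commercial_score; the "first recommendation paragraph if it appears earlier" exception and the table/CTA exception still need a human eye. The function name is hypothetical.

```python
def pick_placements(rows, primary_min=70, secondary_min=40):
    """Return (first primary paragraph_id or None, list of secondary candidates)."""
    primary = next(
        (r["paragraph_id"] for r in rows if r["commercial_score"] >= primary_min),
        None,
    )
    secondary = [
        r["paragraph_id"]
        for r in rows
        if secondary_min <= r["commercial_score"] < primary_min
    ]
    return primary, secondary

# commercial_score values taken from the sample output above.
rows = [
    {"paragraph_id": "p01", "commercial_score": 10},
    {"paragraph_id": "p02", "commercial_score": 18},
    {"paragraph_id": "p03", "commercial_score": 72},
    {"paragraph_id": "p04", "commercial_score": 80},
    {"paragraph_id": "p05", "commercial_score": 55},
    {"paragraph_id": "p06", "commercial_score": 66},
    {"paragraph_id": "p07", "commercial_score": 44},
]
print(pick_placements(rows))  # ('p03', ['p05', 'p06', 'p07'])
```

Note that p05 shows up as a secondary candidate here because this sketch ignores transactional_score; whether a pricing paragraph deserves a link is still an editorial call.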

Honestly, I was a bit surprised the first time I saw criteria paragraphs outperform “pricing paragraphs” on CTR. But it makes sense: pricing is late-stage; criteria is the moment you start filtering options.

Once you’ve got a defensible “move this link here” recommendation, the next failure mode is attribution: if you don’t tag placements cleanly, you can’t separate a real placement lift from a traffic-mix glitch. So let’s instrument it with SubIDs and run a before/after that doesn’t lie to you.

3) Instrument the before/after test with SubIDs to measure real CTR impact (within one article)

Then comes the unglamorous part.

Measurement.

If you don’t tag placements cleanly, you’ll end up arguing with yourself about whether the lift came from position, device mix, or a random spike in “ready-to-buy” traffic.

SubID schema for intra-article placement testing (portable across networks)

impact.com’s write-up is a good reminder that subIDs are where you store the metadata you wish networks gave you by default—like link type and placement (impact.com).

Keywordrush also has a practical mapping of common parameter names across networks (Impact subId1–subId5, CJ sid, ShareASale afftrack, etc.), plus the reality of character limits (Keywordrush).

My portable schema (five slots if you have them; collapse if you don’t):

- sub1 = article_id (short slug or numeric ID)

- sub2 = variant (pre, post, or A, B)

- sub3 = placement_id (the money field)

- sub4 = device (m, d)

- sub5 = intent_band (hi, med, lo) based on the paragraph score bucket

Placement IDs are where I get picky:

- p05_inline (paragraph 5, inline link)

- t01_r01 (table 1, row 1)

- cta_mid_01 (mid-article CTA block)

Before I launch anything, I run a quick placement URL audit in LinksTest to confirm every outbound affiliate URL has the right SubID slots and that redirects aren’t stripping parameters.

One more thing: keep values short and URL-safe.

Networks vary, and truncation bugs are a special kind of headache (Keywordrush).
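Here is a rough sketch of how I'd stamp the five slots onto a URL and check that none are missing. The parameter names follow the Impact-style subId1 to subId5 naming mentioned above; other networks use different names, so treat SLOT_NAMES as an assumption to swap out. The 32-character cap is also my assumption, not a documented network limit.

```python
import re
from urllib.parse import urlsplit, urlunsplit, parse_qsl, urlencode

# Assumed Impact-style names; CJ, ShareASale, etc. use other parameters.
SLOT_NAMES = ["subId1", "subId2", "subId3", "subId4", "subId5"]
SAFE_VALUE = re.compile(r"[A-Za-z0-9_-]{1,32}")  # 32 is an assumed cap

def tag_url(url, article_id, variant, placement_id, device, intent_band):
    """Return url with the five SubID slots set, replacing any existing ones."""
    values = [article_id, variant, placement_id, device, intent_band]
    for value in values:
        if not SAFE_VALUE.fullmatch(value):
            raise ValueError(f"SubID value not short and URL-safe: {value!r}")
    parts = urlsplit(url)
    query = [
        (k, v)
        for k, v in parse_qsl(parts.query, keep_blank_values=True)
        if k not in SLOT_NAMES
    ]
    query += list(zip(SLOT_NAMES, values))
    return urlunsplit(parts._replace(query=urlencode(query)))

def missing_slots(url):
    """List slot names that are absent or empty in the URL's query string."""
    present = dict(parse_qsl(urlsplit(url).query))
    return [name for name in SLOT_NAMES if not present.get(name)]

tagged = tag_url("https://example.com/go?offer=123",
                 "pwmgr", "post", "p05_inline", "m", "hi")
print(tagged)
print(missing_slots("https://example.com/go?offer=123&subId1=pwmgr"))
```

Running missing_slots against the final URL after redirects (not just the one in your CMS) is what catches parameter stripping.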

Before/after design that doesn’t lie to you (controls, segments, and pitfalls)

I’m going to admit upfront: this one gave me a bit of a headache.

Before/after tests are easy to contaminate.

What I do now (as of 2026-03-04):

- Run equal windows (e.g., 14 days pre, 14 days post) and align weekdays to dampen seasonality.

- Segment by device because “first two screenfuls” is a different amount of content on mobile.

- Track scroll depth distribution as a guardrail—if post-period users scroll less, your “placement loss” might just be lower engagement (Plausible).

- Watch for anchor-link TOCs and infinite scroll edge cases that can distort scroll events (GrowthLearner).
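The device segmentation in that list is mostly a group-by. A minimal sketch, assuming you can export click rows carrying the sub2 (variant) and sub4 (device) slots and that you have session counts per segment from your analytics tool; both data shapes are hypothetical.

```python
from collections import Counter

def ctr_by_segment(session_counts, click_rows):
    """Return {(variant, device): CTR} from session counts and click rows."""
    clicks = Counter((row["sub2"], row["sub4"]) for row in click_rows)
    return {
        segment: clicks[segment] / sessions
        for segment, sessions in session_counts.items()
        if sessions > 0
    }

# Illustrative inputs only.
session_counts = {("pre", "m"): 100, ("pre", "d"): 50, ("post", "m"): 100}
click_rows = [
    {"sub2": "pre", "sub3": "p12_inline", "sub4": "m"},
    {"sub2": "pre", "sub3": "p12_inline", "sub4": "d"},
    {"sub2": "post", "sub3": "p05_inline", "sub4": "m"},
    {"sub2": "post", "sub3": "p05_inline", "sub4": "m"},
]
for segment, ctr in ctr_by_segment(session_counts, click_rows).items():
    print(segment, f"{ctr:.2%}")
```

If the lift only shows up on one device, that is usually a fold effect rather than an intent effect.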

If you can do a true split (server-side bucketing), do it.

If you can’t, at least keep the test window tight and the change set minimal: move links, don’t rewrite half the article.
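For the true-split case, one common way to do server-side bucketing is to hash a stable visitor identifier so the same visitor always lands in the same variant. This is a generic sketch, not tied to any particular platform; the visitor_id source and the experiment name are assumptions.

```python
import hashlib

def assign_variant(visitor_id, experiment="best_overall_link", split=0.5):
    """Deterministically map a visitor to variant "A" or "B"."""
    key = f"{experiment}:{visitor_id}".encode("utf-8")
    digest = hashlib.sha256(key).digest()
    # Turn the first 8 bytes into a number in [0, 1).
    bucket = int.from_bytes(digest[:8], "big") / 2**64
    return "A" if bucket < split else "B"

# The same ID always gets the same answer, so the page can render the
# matching link position and write the variant into the sub2 slot.
print(assign_variant("visitor-123"))
print(assign_variant("visitor-123"))
```

Including the experiment name in the hash keeps bucket assignments independent across tests on the same visitors.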

Worked example (hypothetical numbers): moving one link to the first high-intent paragraph

Imagine a mid-size SaaS affiliate site with one high-traffic “Best X for Y” article.

Say I run this as a clean before/after over four weeks:

- Pre period: 14 days

- Post period: 14 days

- Sessions: 40,000 each period (stable within ~2%)

- Change: moved the primary affiliate link from p12_inline (LLM commercial_score 38) to p05_inline (commercial_score 78). No other edits.

Pre (variant=pre)

- Placement p12_inline: 40,000 sessions → 360 clicks → 0.90% CTR

- Conversions attributed to that SubID: 18 → 5.0% CVR (18/360)

Post (variant=post)

- Placement p05_inline: 40,000 sessions → 720 clicks → 1.80% CTR

- Conversions attributed to that SubID: 36 → 5.0% CVR (36/720)

So CTR doubled, CVR held, conversions doubled.
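The arithmetic is easy to reproduce, and it is worth adding a rough noise check on top. The sketch below recomputes CTR and CVR from the hypothetical numbers above and adds a pooled two-proportion z-score, which is my addition rather than part of the original method. A before/after test is not randomised, so the z-score only speaks to sampling noise, not to traffic-mix changes.

```python
import math

def two_proportion_z(x1, n1, x2, n2):
    """Pooled two-proportion z-score for rate x2/n2 versus x1/n1."""
    p1, p2 = x1 / n1, x2 / n2
    pooled = (x1 + x2) / (n1 + n2)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n1 + 1 / n2))
    return (p2 - p1) / se

# Hypothetical numbers from the worked example.
pre = {"sessions": 40_000, "clicks": 360, "conversions": 18}
post = {"sessions": 40_000, "clicks": 720, "conversions": 36}

ctr_pre = pre["clicks"] / pre["sessions"]
ctr_post = post["clicks"] / post["sessions"]
cvr_pre = pre["conversions"] / pre["clicks"]
cvr_post = post["conversions"] / post["clicks"]
z = two_proportion_z(pre["clicks"], pre["sessions"],
                     post["clicks"], post["sessions"])

print(f"CTR pre {ctr_pre:.2%}, post {ctr_post:.2%}, ratio {ctr_post / ctr_pre:.1f}x")
print(f"CVR pre {cvr_pre:.1%}, post {cvr_post:.1%}")
print(f"z-score for the CTR difference: {z:.1f}")
```

With volumes like these the difference is far outside sampling noise; on a low-traffic page the same 2× ratio could easily not be.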

That’s the cleanest outcome: more people clicked because more people saw the link at the moment they were already evaluating.

Would it always be 2×? Of course not.

But the method is stable: intent-score → move → SubID-measure → segment-check.

If you run this and your CTR rises but CVR drops, that’s still useful—it usually means you moved the link into a paragraph that reads “curious” rather than “choosing,” and you’re paying for low-quality clicks.

Alright—let’s wrap it up with a recap you can actually use the next time you’re staring at a 3,000-word draft and arguing with yourself about where the first “Best overall” link belongs.

Key Takeaways

If you only remember one thing, make it this: affiliate link placement is mostly about aligning with where attention still exists and where intent has already flipped—not about sprinkling more links and hoping the scroll gods smile on you.

- Attention is top-heavy. NN/g’s eyetracking found 57% of viewing time above the fold and 74% within the first two screenfuls (to ~2160px) on a 1920×1080 display (NN/g). That’s your primary click inventory.

- Quantify the penalty with your own position-decay curve. Use scroll depth distribution as a sanity check (Plausible’s 1–100% tracking is handy here) and remember the edge cases like anchor links and infinite scroll (Plausible; GrowthLearner).

- Use paragraph-level intent scoring to decide where links “belong.” Put primary links next to the first high-intent paragraphs (criteria and early recommendations tend to be the sweet spot), and keep low-intent setup paragraphs clean.

- Validate with SubIDs, not gut feel. Tag placement IDs so you can attribute CTR/CVR changes to where the click happened, not just which offer converted (impact.com; Keywordrush).

I’m aware this isn’t the whole picture—copy, offer quality, SERP intent, and “LLM discovery” shifts all mess with the baseline. But if you can consistently find the first “choosing” paragraph and tag placements cleanly, you’ll stop making link moves blind. And that’s… oddly satisfying.

Sources

- NN/g: Scrolling and Attention (eyetracking; 57% above fold, 74% first two screenfuls)

- Plausible: Scroll depth tracking (automatic 1–100% scroll depth)

- GrowthLearner: Scroll depth tracking considerations (GTM thresholds, anchor links, infinite scroll)

- impact.com: Boosting commerce content performance with subID tracking (placement/link-type metadata, A/B testing)

- Keywordrush: Dynamic SubID tracking (multi-slot schemas, network parameter differences, limits)

- Hello Partner: Affiliate content performance across LLMs (platform citation frequency)